The Complete Guide to Empathetic Marketing

Successful empathetic marketing is about connecting your audience and your brand. That doesn’t mean just throwing ads at your audience. It means creating truly valuable assets — content that serves customers’ needs and addresses their most significant pain points.

This type of content is much easier to create when it’s informed and driven by empathy. When you put yourself in your customers’ shoes, you can more easily acknowledge struggles and think critically about the best solutions.

Below, let’s go over why empathetic marketing is such a powerful strategy for businesses of all types and sizes, tips for infusing more empathy into your marketing, and a few real-life examples of empathetic marketing in practice.

The Benefits of Empathetic Marketing

As Dr. Brené Brown notes, “Empathy is feeling with people.”

Showing empathy in your marketing helps build trust between your brand and your customers. And during a time when more consumers are losing confidence in brands, brand trust is a major win if you can achieve it.

A 2022 PwC survey found that only 30% of consumers have a high level of trust in companies.

If you can get on the other side, however, you may be on your way to becoming one of the most trusted brands by consumers.

All it takes is a more insightful perspective on where your customer is coming from, their needs, and how your brand can help them meet their goals.

Tips for Empathetic Marketing

You know you want to infuse more empathy into your marketing, but how exactly can you do that? Here are the best tips to remember if you want to be an empathetic marketer.

Put the customer at the forefront.

Empathetic marketing starts and ends with your customer, so it only makes sense to put their wants and needs at the forefront.

Empathy is about understanding something from another’s perspective by seeing something through their eyes. To empathize with customers, imagine their experience with your brand. Look at your product or service from their viewpoint, and think about each step they may take.

Better yet, you can follow real-life customer journeys to see their actions when shopping on your site or digesting your content.

To truly understand your customers’ experiences with your brand, take time to dive into each step of their journey so you can better understand what they may want or need during each stage.

Be open to feedback.

Operating in a vacuum is easy because that’s how things have always been done. But to truly practice empathy in your marketing, you have to bring your customers into the planning process so you can hear directly from them.

They can share what they want to see from your brand or what should be changed.

To collect feedback from your audience, go directly to the source. Run a survey or host a focus group to learn exactly what your customers’ challenges are, what they need, and how they view your brand.

These insights can help you better understand how your product or service plays a role in helping your customers navigate their challenges or achieve their goals.

Your customers will tell you if the messaging doesn’t land. Be open to shifting your approach if that’s what it takes for your message to resonate.

Always be listening.

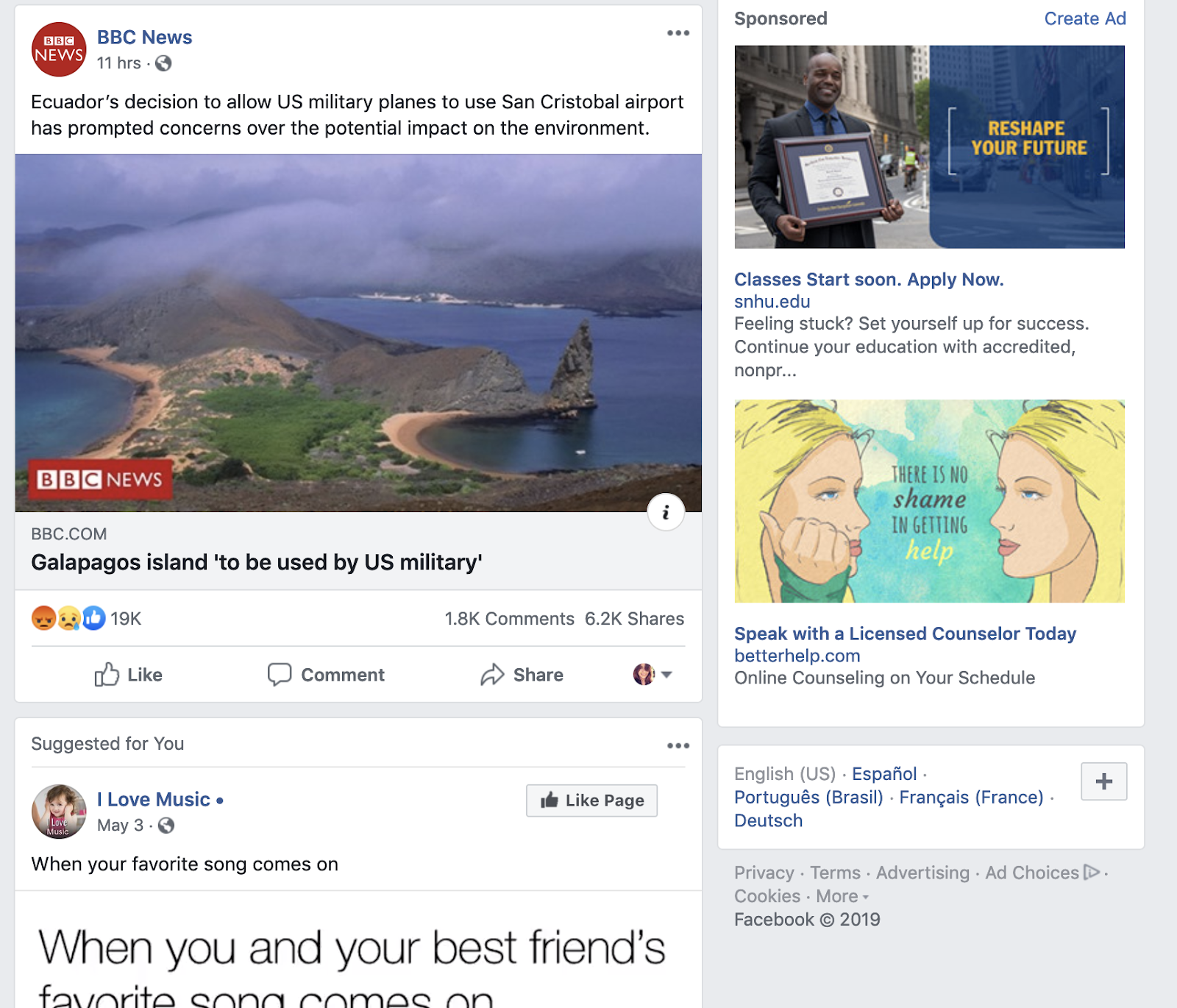

While you should always collect direct feedback from your customers and audience, gathering insights that they don’t personally share with you is essential. People tend to be more honest when they aren’t talking directly to a brand or think the brand won’t see their comments.

Pay attention to the overall sentiment when your brand is mentioned online to see the general feelings towards your company, whether positive or negative.

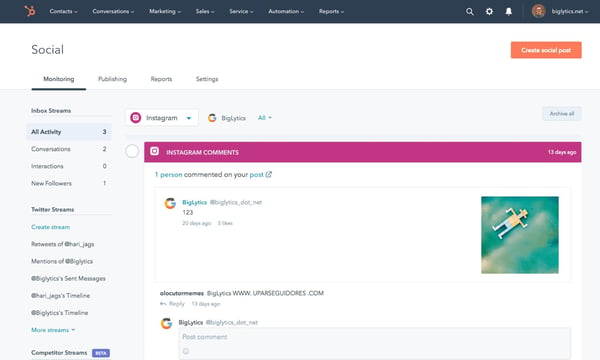

Tune into your customers’ conversations, the feedback they’re sharing about their experience, and their general sentiment about your brand. You can do this by monitoring social media comments, checking out reviews on your site, or tracking reviews on third-party sites.

Be genuine.

Understanding your audience and their various needs is essential to empathetic marketing. The last thing you want is to break their trust. Being fake or putting on a persona is the quickest way to do that.

Whenever you share content or conduct outreach, be genuine in your approach. Transparency goes a long way in being authentic, so always lead with empathy if you want your content or messaging to resonate.

Provide your customer with the right content.

After all of the listening and empathizing you’ve done, it would be a shame not to put that learning into practice. And yet, some brands continue to share content their audience isn’t interested in. This is the last thing you want to do.

If you want your marketing approach to resonate with your customers, delivering the content you promised them is essential.

After running surveys or focus groups, explore how you can adjust your product, messaging, or communication channels to better meet the needs of your most loyal customers.

Empathetic Marketing Examples

Now that you know what empathetic marketing is and how to incorporate it into your strategy, let’s walk through eight brands that nail empathetic content marketing across various media.

LUSH

With the tagline, “Fresh, handmade cosmetics,” LUSH is a beauty brand that is all about natural products.

As such, we see its radical transparency in the “How It’s Made” video series, where LUSH goes behind the scenes of some of its most popular products.

Each episode features actual LUSH employees in the “kitchen,” narrating how the products are made. Lush visuals (pun intended) showcase just how natural the ingredients are.

You see mounds of fresh fruits, tea infusions, and salt swirled together to become the product you know and love. It’s equal parts interesting and educational.

Why This Works

LUSH customers want to buy beauty products that are truly natural. They care about using fresh, organic, and ethically sourced ingredients — hence why the videos feature colorful, close-up shots of freshly-squeezed pineapple and jackfruit juices to drive that point home.

Taking customers inside the factory and showing them every part of the process — with a human face — assures them that they can consume these products with peace of mind.

LinkedIn

LinkedIn Talent Solutions provides HR professionals with the tools they need to improve recruitment, employee engagement, and career development practices within their organization.

LinkedIn Talent creates helpful content on a dedicated blog to supplement these tools. The blog offers tips that address the challenges of the talent industry. LinkedIn also develops reports offering deeper insight into different industry sectors, such as this Workplace Learning Report.

Why This Works

One effective empathetic marketing tactic is education. LinkedIn wants to empower its audience to work and hire better (and use its product to do so).

This report is just one tool that offers its audience deeper insight into the industry while positioning the brand as a powerful resource.

Through offerings like this, customers learn that they can rely on LinkedIn as a trusted source to guide them in the right direction, and LinkedIn can continue to provide solutions through its product offerings. It’s a win-win all around.

The Home Depot

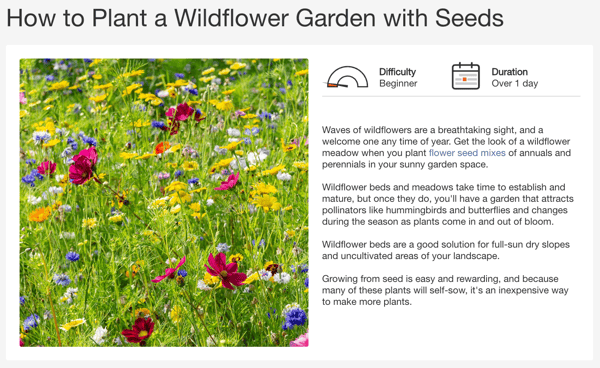

The Home Depot is a home and garden supply store that caters to all types of builders and DIY-ers — whether you’re a construction worker building a gazebo or a homemaker experimenting with gardening.

In other words, their content must cater to various demographics.

Home Depot is all about DIY, so its marketing focuses on what its supplies can help you do.

This “How to Plant a Wildflower Garden with Seeds” guide teaches consumers to grow their own wildflower garden using seeds, common flower types to plant, and what supplies they need. It even outlines the difficulty level and estimated time to complete the project.

Why This Works

As one of the most trusted brands by consumers, Home Depot knows its customers rely on the store to supply them with DIY tools and help them navigate these hands-on projects, with a little encouragement along the way.

This quick guide delivers on these needs and inspires customers to take action.

Extra

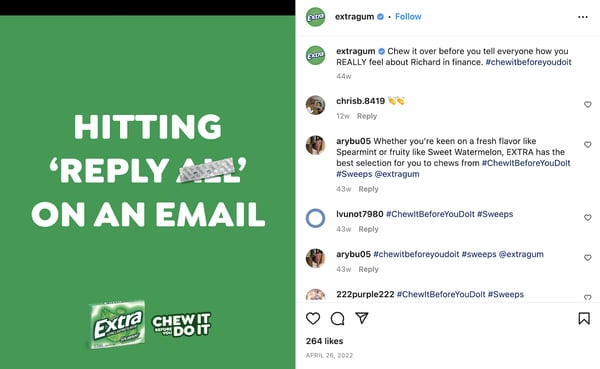

We’ve seen just about every twist on gum marketing: sexy encounters, romantic trysts, and more. Extra is pushing past that narrative.

The brand realizes that gum is a seemingly mundane product, but its omnipresence means it’s there for many of life’s little moments.

Hence, the #ChewItBeforeYouDoIt campaign is all about taking a moment to chew a piece of gum before you say or do something in your daily life. Extra suggests that doing so can be the difference between a good moment and an awkward experience.

Why This Works

In many ways, gum is a product meant to enhance intimacy, making your breath fresh for more closeness. In our techno-connected world, those everyday moments of intimacy are often overlooked.

This campaign relates to regular moments we’ve all experienced and points out how something as simple as chewing gum can make a difference in your day.

Microsoft

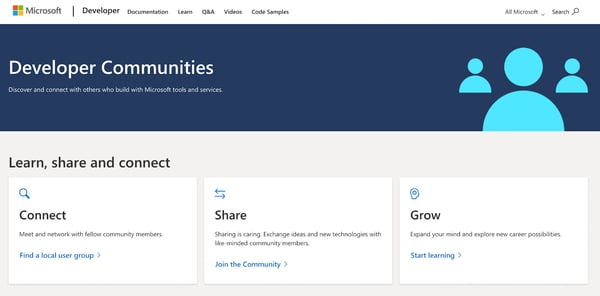

Microsoft offers a range of products from Azure to Microsoft 365. Many of these products are generally used by developers to build their own platforms or tools. To make sure these developers are supported, Microsoft created communities.

These communities help developers connect and learn from one another and are organized into different product categories, such as Microsoft 365 or gaming. People can tailor their experience based on what topics they’re interested in.

Why This Works

Developers are always seeking tips and tricks for using their go-to tools, and while there are many digital channels from which to learn, going straight to the source is always a great option.

Through interactive communities, Microsoft ensures developers can get the support and training they need to use its tools and even connect with others.

Michael’s

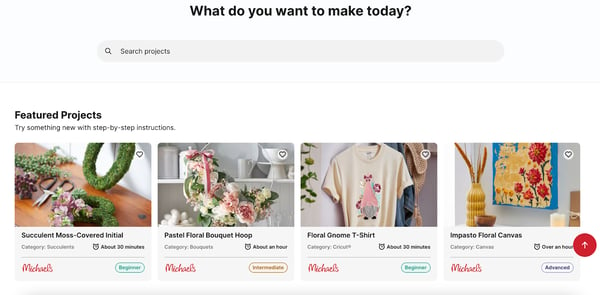

In a world where Pinterest dominates, Michael’s chain of craft stores is making a play to capture its own audience on its own properties. The brand provides craft tutorials and product features on a projects page on its website.

These projects offer step-by-step instructions on creating various crafts for beginners and advanced crafters alike.

Each project on the site also includes links to materials you may need that can be found in Michael’s online store. If you want more help with your craft, Michael’s even offers virtual and in-store classes for select projects.

Why This Works

Crafting is an exciting hobby, but not without its own frustrations. Providing useful tips and hacks on how to do things better via a free publication helps readers do more of what they love with fewer headaches.

Additionally, fans get to share their enthusiasm on social media by using the hashtag #MakeItWithMichaels, helping Michaels extend its reach to a bigger crafting audience.

JetBlue

JetBlue is a brand known for superb customer service and humor. At this point, we know where it flies and we know its hook, so its marketing needs to extend beyond the services provided.

As such, JetBlue’s content focuses more on the world of flying and the experiences we all have.

JetBlue addresses every type of customer who may fly on its planes, from families to pets to children. That’s one reason the airline launched JetBlue Jr., an educational video series for kids ages 7–10.

The videos go over all types of aviation topics, from vocabulary to physics, in an entertaining and digestible way for kids to learn.

Why This Works

If you’re a parent, you know how much of an undertaking it can be to fly with children.

Brand marketing isn’t often tailored to children, so it’s refreshing to see JetBlue consider all passengers and empathize with a parent’s desire to keep their kids entertained while traveling.

Girlfriend Collective

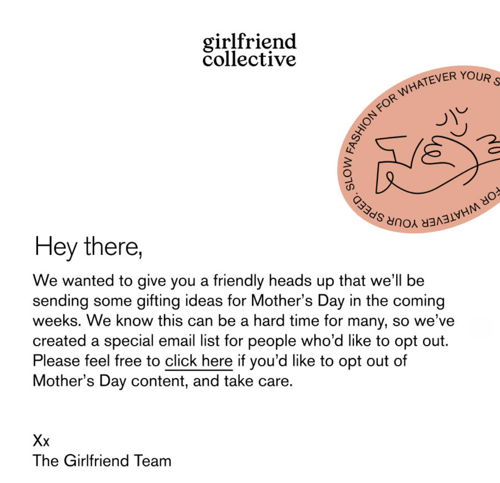

Girlfriend Collective is a sustainable clothing brand. While it has a devoted following, it’s always searching for ways to more deeply connect with its audience. The company’s email marketing channel is a fantastic outlet for that.

Girlfriend Collective uses email to share new products or upcoming launches. The brand also generally uses a targeted approach to help customers make purchasing decisions, sending more personalized emails.

One email from the brand was more personal than most and showed deep empathy and understanding for its audience.

Before Mother’s Day, Girlfriend Collective sent this email to customers, allowing them to opt out of receiving Mother’s Day promos.

Why This Works

Holidays like Mother’s Day or Father’s Day can be emotional for many people for various reasons. Girlfriend Collective gave its audience a choice to opt out of seeing these potentially triggering emails, which not many brands take the opportunity to do.

This move demonstrates that Girlfriend Collective cares about its customers and sees them as humans.

Ready to Try It?

Approach the content you seek to create from a perspective that puts others’ wants, needs, and dreams before your own. That’s the smartest way to grow an audience.

In doing so, you’re showing people that you care about them as humans, first and foremost. People want to work with (B2B) or support (B2C) people that they like and companies that they believe “get” them.

You can always talk about your brand and what you’re peddling once a connection and a relationship are established. But if you do things right, people will be drawn to you, and you won’t ever have to toot your own horn.

AI Marketing Automation: What Marketers Need to Know

Artificial Intelligence continues solidifying itself as a crucial tool within the marketing industry, especially regarding automation.

In fact, the market for artificial intelligence in marketing is expected to grow to more than $107.5 billion by 2028 — a huge leap from $15.84 billion in 2021.

So, marketers must stay current on the many ways AI marketing automation can and should be used to remain competitive. To keep you in the loop, here’s a breakdown of AI’s role in marketing automation and how marketers can leverage it.

How is AI driving marketing automation?

Why Marketers Should Use AI in Marketing Automation

Ways to Use AI in Marketing Automation

Successfully Implementing AI Marketing Automation

How is AI driving marketing automation?

At its core, AI uses machine learning to mimic how humans learn and improve accuracy by analyzing large stacks of data. When applied to marketing automation, AI analyzes vast data sets to pinpoint patterns, predict customer behavior, and make immediate decisions.

As a result, AI and machine learning algorithms are helping marketers automate and optimize tasks that would otherwise be tedious, time-consuming, and expensive. So, it’s no surprise that AI marketing automation is here to stay.

In 2020, the global market for marketing automation was $4,438.7 million, and it’s expected to grow to $14,180.6 million by 2030. Moreover, the top 28% of businesses actively use marketing automation and AI tools in their process.

Why Marketers Should Use AI in Marketing Automation

AI-powered marketing automation can streamline marketing processes. This gives marketers the time and space to focus on other aspects of their job — such as brainstorming and strategizing.

AI marketing automation also makes sending personalized content to customers easier, thanks to data and algorithms. Other benefits include cost efficiency and optimization of ROI.

Automating repetitive tasks can save money. For example, using AI chatbots to communicate with customers would eliminate the need for human customer service agents, which can save costs over time.

And companies that implement AI in marketing see an average increase in ROI of up to 30%, according to a study by Accenture.

Ways to Use AI in Marketing Automation

Below are some ways marketers can leverage AI to automate their processes.

Personalization

McKinsey’s Next in Personalization Report shows 71% of consumers expect companies to provide personalized interactions. Furthermore, 76% of consumers experience frustration when they don’t receive personalized interactions.

Creating personalized experiences for all of your customers can be tedious, time-consuming, and unrealistic without automation.

AI can automate the process by analyzing customer data and behaviors and using that information to tailor each customer’s experience.

For example, Whole Foods leverages AI to provide customers with personalized messaging.

In 2021, Whole Foods opened several Just Walk Out stores across the U.S. The stores allow customers to pick up their items and leave without stopping at a register.

Instead, the items are charged via AI. The purchase information gathered by the AI is then used to identify patterns and predict future behaviors. This allows the AI to send personalized messages to customers.

So, if a customer purchases frozen vegan dinners, Whole Foods could send promo codes and discounts for other vegan products.

Email Automation

Marketers can send tons of emails to potential leads, but it can take significant time away from more big-picture duties. Your company can quickly send thousands of personalized emails using AI for marketing email automation.

This is especially helpful as your email list grows because who has time to send 200,000 emails multiple times a week?

Furthermore, AI can analyze the performance of your emails in real time, and you can use the data to improve your next set of emails.

Lead Scoring and Nurturing

AI can quickly and efficiently analyze data to determine which leads will likely become customers. With AI, marketers can save time and money on lead scoring while improving their leads’ quality.

AI can also automate the lead nurturing process by effectively guiding leads through the sales funnel until they are ready to purchase, boosting conversion rates.
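To make lead scoring concrete, here is a minimal sketch in Python. It is not how any particular AI tool works: the `Lead` fields and the `WEIGHTS` values are hypothetical, and the weights are fixed by hand, whereas an AI-driven tool would typically learn them from historical conversion data. The idea is simply that each lead gets a score based on its engagement, and the highest-scoring leads are prioritized.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen for illustration only. An AI-driven tool
# would typically learn these from historical conversion data.
WEIGHTS = {"email_opens": 2, "page_views": 1, "demo_requested": 20}


@dataclass
class Lead:
    name: str
    email_opens: int
    page_views: int
    demo_requested: bool


def score(lead: Lead) -> int:
    """Return a weighted engagement score for one lead."""
    return (
        WEIGHTS["email_opens"] * lead.email_opens
        + WEIGHTS["page_views"] * lead.page_views
        + WEIGHTS["demo_requested"] * int(lead.demo_requested)
    )


def rank(leads: list[Lead]) -> list[Lead]:
    """Sort leads from highest to lowest score."""
    return sorted(leads, key=score, reverse=True)


# Made-up example leads.
leads = [
    Lead("Ana", email_opens=3, page_views=10, demo_requested=False),
    Lead("Ben", email_opens=1, page_views=2, demo_requested=True),
]
ranked = rank(leads)
for lead in ranked:
    print(lead.name, score(lead))
```

In this sketch, a demo request outweighs a handful of page views, so the lead who asked for a demo rises to the top of the list. Changing the weights changes the ranking, which is exactly the part a learning system would tune for you.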

Predictive Analytics

Part of being a successful marketer is being proactive and anticipating trends. Fortunately, AI is an excellent tool for analyzing and predicting customer behavior and trends thanks to algorithms.

This will allow marketers to adjust their marketing strategies according to predictive data. For example, the data can help a business predict the best time to launch a new product.

Channel Optimization

There are many channels to consider when marketing your brand, product, or service. With AI algorithms, marketers can easily identify which channels are the most effective in reaching their target audience.

This allows marketers to properly allocate time and funds to channels with the best return on investment.
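The comparison behind channel optimization comes down to simple arithmetic: return on investment is (revenue - spend) / spend. Here is a small Python sketch that ranks channels by ROI. The channel names and the spend and revenue figures are made up for illustration, and a real AI tool would also account for things like attribution and trends over time.

```python
def roi(revenue: float, spend: float) -> float:
    """Return on investment as a fraction: (revenue - spend) / spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return (revenue - spend) / spend


# Hypothetical (spend, revenue) figures per channel, for illustration only.
channels = {
    "email": (500.0, 2000.0),
    "paid_social": (1000.0, 1500.0),
    "search": (800.0, 2000.0),
}

roi_by_channel = {
    name: roi(revenue, spend) for name, (spend, revenue) in channels.items()
}
best_channel = max(roi_by_channel, key=roi_by_channel.get)

# Print channels from highest to lowest ROI.
for name, value in sorted(
    roi_by_channel.items(), key=lambda item: item[1], reverse=True
):
    print(f"{name}: {value:.0%}")
print("Best channel:", best_channel)
```

With these sample numbers, email comes out ahead because it returns the most revenue per dollar spent, even though it has the smallest budget.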

Customer Service and Communication

62% of consumers would prefer to use a customer service bot rather than wait 15 minutes for human agents to speak with them.

Using AI to respond to customers instantly can improve your customers’ experience and satisfaction while saving time and resources.

AI-powered chatbots can answer frequently asked questions, recommend products, and process orders faster than a team could manually.

Successfully Implementing AI Marketing Automation

To leverage AI marketing automation, you must identify the best tools and platforms to help you reach your marketing goals. From chatbots to software to AI-powered platforms, there are many applications to choose from.

For example, HubSpot’s content assistant is a suite of free, AI-powered features that uses generative AI to help create and share materials such as written content, outlines, and emails.

We also offer ChatSpot, a conversational CRM bot that marketing professionals can connect to HubSpot to maximize productivity.

The feature uses chat-based commands to interact with your CRM data, so you can accomplish everything you already do in HubSpot faster.

You don’t have to be super tech-savvy to implement AI marketing automation into your business.

All you need is to identify repetitive tasks within your process that could be improved by automation, then find the right tools or software to suit your needs.

Now that you know what AI marketing automation is, you’re ready to find ways to use it.

16 Great Examples of Welcome Emails for New Customers [Templates]

We’ve all heard the maxim “First impressions last,” so we know how important it is to make a good one.

Show up late for a job interview? That’s a bad first impression. Eat a clove of garlic and forget to brush your teeth before a first date? Also a bad first impression.

It turns out that the “make a good first impression” principle holds true not only in face-to-face encounters but in email interactions as well. Making a good impression in your emails goes a long way toward connecting with potential business contacts or customers.

When you send a welcome email to a new blog reader, newsletter subscriber, or customer, you’re making a first impression on behalf of your brand. To help ensure you’re making the best first impression possible, we’ve rounded up some examples of standout welcome emails from brands big and small.

Pro Tip: Use HubSpot’s free email marketing software to easily create a high-quality welcome email sequence like the ones featured below.

Each example below showcases different tactics and strategies for engaging new email subscribers. Let’s dive in.

The Components of an Impressive Welcome Email

One factor that really impacts the customer onboarding process is the welcome email. While there’s no one-size-fits-all format, there are several key components that can help your email stand out from the crowd and connect with your intended audience. These include:

1. Compelling Subject Lines

Making sure recipients actually open your emails is the first step in making a good impression. Subject lines are critical, so opt for short, straight-to-the-point subject lines that state clearly what you’re sending, who it’s from, and why it matters to potential customers.

2. Content Recommendations

While the main purpose of welcome emails is to introduce your brand, it’s also critical to add value by providing the next steps for interested customers. A good place to start is by offering links to the great content on your website that will give your customers more context if they’re curious about what you do and how you do it.

3. Custom Offers

Personalization can help your welcome emails stand out from the pack. Customized introductory offers on products are something consumers often want. If you base these offers on the information they’ve provided or data available to the public through social platforms, welcome emails can help drive ongoing interest.

4. Clear Opt-Out Options

It’s also important to offer a clear way out if users aren’t interested. Make sure all your welcome emails contain “unsubscribe” options that allow customers to select how much (or how little) contact they want from you going forward. If there’s one thing that sours a budding business relationship, it’s the incessant emails that aren’t easy to stop. Always give customers a way to opt out.

Examples of Standout Welcome Emails

So what does a great welcome email look like? We’ve collected some standout welcome message series examples that include confirmation messages, thank you emails, and offer templates to help you with your customer onboarding process from start to finish — and make a great impression along the way.

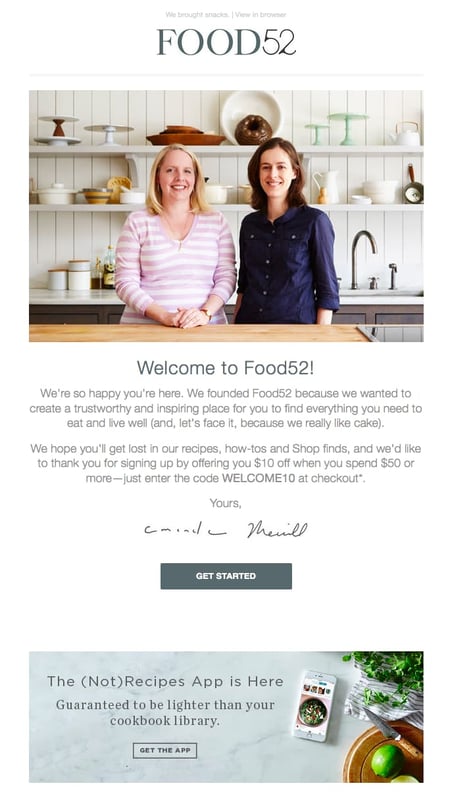

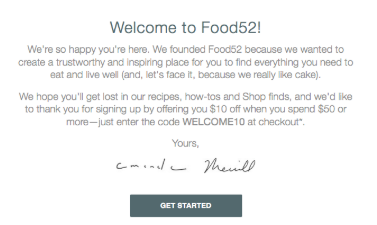

1. Food52

Type of Welcome: Confirmation

Sometimes the tiniest of elements in a welcome email can speak volumes about a brand. And when it comes to Food52’s welcome email, the preview text at the top of the email, “We brought snacks,” definitely accomplishes this.

Also known as a pre-header or snippet text, the preview text is the copy that gets pulled in from the body of an email and displayed next to (or beneath) the subject line in someone’s inbox. So when you see Food52’s welcome email in your inbox, you get a taste of their brand’s personality before you even open it.

Food52’s welcome email also does a good job of building trust by putting a face (make that two faces) to their name. As soon as you open the email, you see a photograph and message from the company’s founders.

2. Monday.com

Type of Welcome: Video

From the subject line down to the conversational tone of the email body, the welcome email above keeps things friendly and simple, so the focus stays on the introductory video inside.

Monday.com is a task management tool for teams and businesses, and the welcome email you get when you sign up makes you feel like a CEO, because Roy Man is speaking directly to you. The email even personalizes the opening greeting by using the recipient’s first name, a tactic that is well known for increasing email click-through rates (especially if the name is in the subject line).

The more you can make your email sound like a one-on-one conversation between you and your subscriber, the better. If you have a lot of details to share with your new customer, follow Monday.com’s lead and put them in a video, rather than spelling them all out in the email itself.

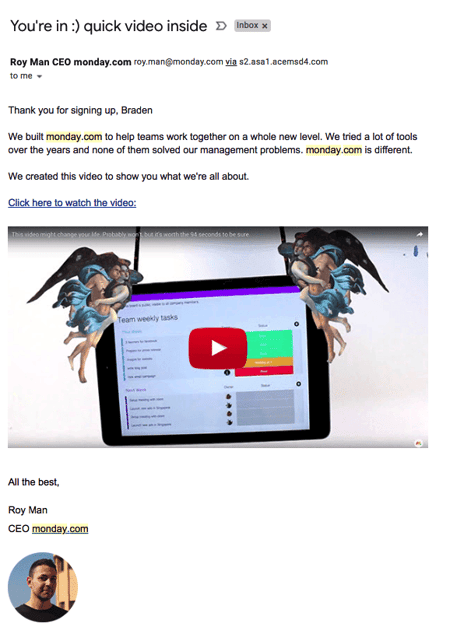

3. Kate Spade

Type of Welcome: Thank You

Let’s face it, the internet-using public is constantly bombarded with prompts to sign up for and subscribe to all sorts of email communications. So as a brand, when someone takes the time to sift through all the chaos to intentionally sign up for your email communications, it’s a big deal.

To acknowledge how grateful they are to the folks who actually take their time to subscribe, Kate Spade uses a simple but effective tactic with their welcome emails. They say “Thank You” in big, bold lettering. By placing that “Thank You” note on an envelope, Kate Spade recreates the feeling of receiving an actual thank you letter via mail. (The 15% off discount code doesn’t hurt either.)

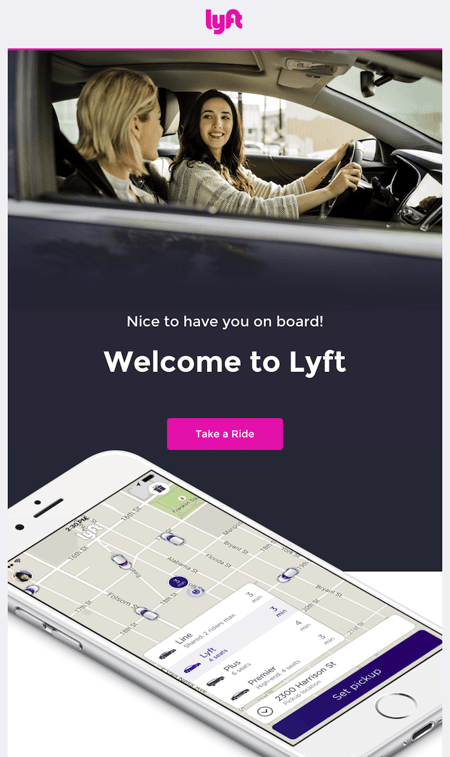

4. Lyft

Type of Welcome: New Customers

If there’s an ideal “attitude” that welcome emails should give off, Lyft has it. The company’s simple but vibrant welcome email focuses entirely on the look and feel of the app, delivering a design that’s as warm and smooth as the lifts that Lyft wants to give you.

At the same time, the email’s branded pink call-to-action draws your eyes toward the center of the page to “Take a Ride,” inviting language that doesn’t make you feel pressured as a new user.

5. Munk Pank

Type of Welcome: About Us

The Munk Pank’s welcome email is the story of why the company was founded. This is a healthy snack store founded by a husband and wife. In their welcome email, they mention that they started the company because they never seemed to find nutritious snacks to keep them energized and on the go.

This is an excellent version of a welcome email because the founders let their customers know they can relate to the problems those customers are facing; they’ve been there themselves. This helps build trust and relatability, and it gives customers a peek into what they should expect from the products.

The email ends by sharing the company’s mission to help customers live a healthy lifestyle. This welcome email lets subscribers know that they’re joining a tribe that cares about healthy eating and a healthy lifestyle, a mission that goes beyond snacks.

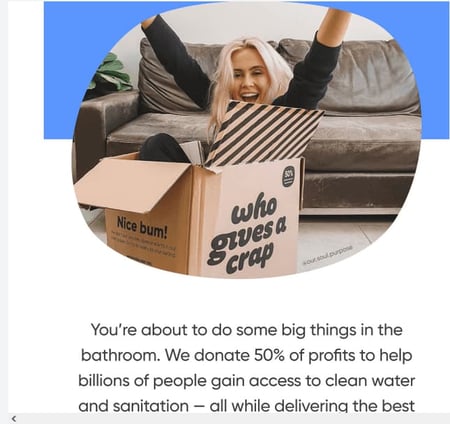

6. Who Gives a Crap

Type of Welcome: Product Story

Who Gives a Crap is an organization that sells organic toilet paper, and they’re passionate about it. Their welcome email is equally fun and informative. They state all the reasons why you should opt for organic and eco-friendly products. Then, they sweeten the pot (pun intended) by noting that they donate 50% of their profits to global sanitation projects.

The email reminds the buyer that they still get the toilet paper at the same price they do in the supermarket. They also have a compelling call to action in their welcome email that offers 10% off of their products for people who subscribe to their email list. The company added its “Shop Now” button for convenience, so if readers are convinced to buy, they can do so in one click.

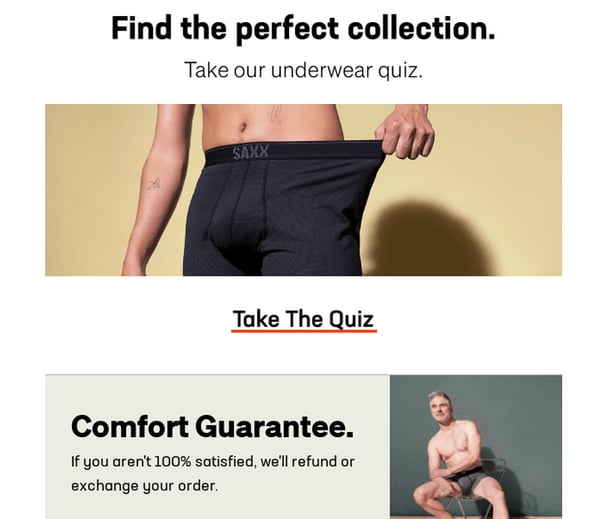

7. SAXX Underwear

Type of Welcome: Free Gift or Offer

SAXX Underwear specializes in men’s underwear, and their welcome email is very catchy and creative. Their subject line “Welcome to you and your balls” is just a taste of how they use a humorous and relatable tone to connect with their audience.

Their welcome email is visual, too. They demonstrate their comfort guarantee with images of models wearing their boxers.

The welcome email also gives a 10% off code for first-time buyers and directs them to their store. Besides the offer, they present their refund policy boldly to offer reassurance for prospects who may be unsure. These gestures help to build trust with their new subscribers and encourage them to buy from them.

What really stands out in the SAXX Underwear welcome email is the tone of the copy and the careful yet bold and catchy choice of words.

8. InVision

Type of Welcome: Free Trial

When you sign up for InVision’s free prototyping app, the welcome email makes it very clear what your next step should be.

To guide people on how to use InVision’s app, the company’s welcome email doesn’t simply list out what you need to do to get started. Instead, it shows you what you need to do with a series of quick videos. Given the visual, interactive nature of the product, this makes a lot of sense.

9. Drift

Type of Welcome: Confirmation

No fancy design work. No videos. No photos. The welcome email Drift sends out after you sign up for its newsletter is a lesson in minimalism.

The email opens with a bit of candid commentary on the email itself. “Most people have really long welcome email sequences after you get on their email list,” Dave from Drift writes, before continuing: “Good news: we aren’t most people.” What follows is simply a bulleted list of the company’s most popular blog posts. And the only mention of the product comes in a brief postscript at the very end.

If you’re trying to craft a welcome email that’s non-interruptive, and laser-focused on adding value instead of fluff, this is a great example to follow.

10. Inbound

Type of Welcome: Event Signup

Inbound attracts business professionals from all over the world. So, it’s fitting that its event confirmation email is simple and easy to follow, with useful links for event information, help, and accessibility.

Keep scrolling and you’ll see even more useful additions, like:

- Links to add the event to your calendar

- Social media sharing buttons

- Directions through Google Maps

This all-in-one approach to event welcomes makes sure that even if people who wish to attend only see one email, that email will include everything they need.

11. Creative Capital

Type of Welcome: New Donor

Nonprofit marketing can be a challenge, but this email sheds light on endless possibilities. In this welcome email, donors to Creative Capital get a healthy dose of inspiration.

The email begins with a striking GIF that combines the work of supported artists with bright thank you messages. It continues with a poetic message about the types of artists the org supports. This is a chance to inspire every donor. It reminds them who their donation is supporting and why that action has massive value.

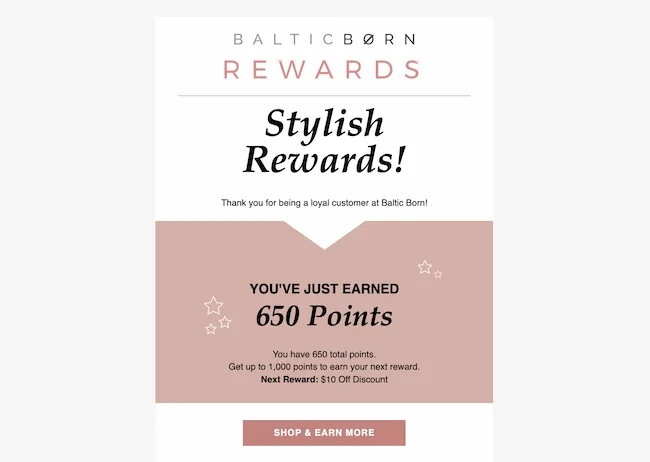

12. Baltic Born

Type of Welcome: Customer Loyalty

Frequent shoppers can end up in more loyalty programs than they can count, so it’s important for these welcome emails to stand out and show off a big offer.

From the start, this email focuses on concrete rewards. Then, it gives a clear explanation of Baltic Born’s reward system. It continues with a button that compels the recipient to get more points.

And the monochromatic design is attractive, but not distracting or overwhelming, making it easy to read on mobile devices.

13. PepTalkHer

Type of Welcome: Confirmation

While many subscribers click submit to solve a problem, positivity is key in a welcome email. This org supports women on their path to wage equality. It could be tempting for this email to start with emotionally charged language or statistics that show how big a problem the gender pay gap is.

Instead, PepTalkHer shows its understanding of its target audience. This email centers on the support, value, and overall awesomeness of this community. It also adds useful links to social media and website channels. This helps jump start each signup’s journey.

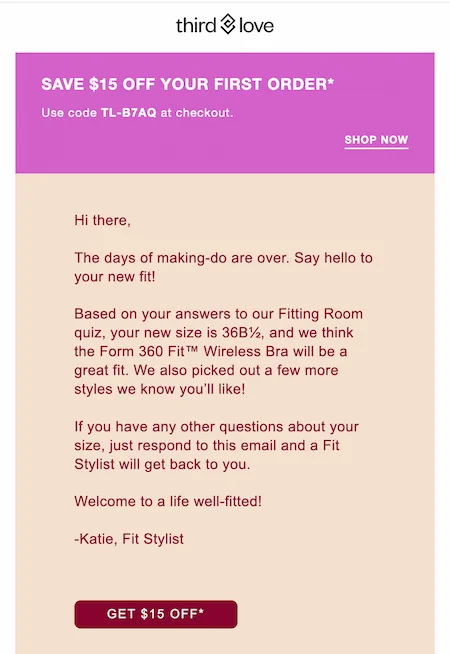

14. Third Love

Type of Welcome: Discount Code

As generative AI runs to the forefront of email marketing strategy, personalization is more important than ever before.

This email grabs subscribers with a personalized offer. The customer experience begins with a well-designed online quiz. Then, the results of that quiz are woven into a useful and personal email that includes size and product recommendations, along with a discount offer.

The writing style of this email is personal too, with a signoff that sounds both supportive and genuine.

15. Swipe Files

Type of Welcome: New Customers

There’s nothing quite like a personal welcome email to make an impression on new subscribers. It’s said that good writing is good thinking, and this welcome email is a great example of that idea. This message reads as authentic, kind, and curious. It uses direct language, easy-to-read paragraphs, and simple calls-to-action. This shows every subscriber what they’re getting into with their subscription and leaves them excited for more.

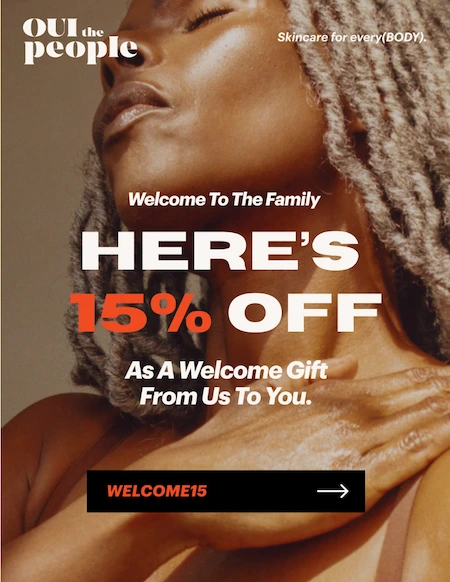

16. Oui the People

Type of Welcome: Discount Code

Powerful graphics are another way to make a strong first impression. After signing up for skincare brand Oui the People’s mailing list, the welcome email that hits your inbox makes a gorgeous visual statement that shows the brand’s vision and personality. Then, it uses bold type to make a compelling offer.

The copy that follows not only matches but amplifies the vibe of the opening image. “Together, we’re going against the grain of traditional beauty to create (damned good) products that feel like they were designed just for you and all of your glorious complexity. Life-changing, not you-changing.” The combination of graphics, CTA, and copy makes it tough not to engage with this welcome.

Welcome Email Templates

Need a little help in getting your welcome email efforts off the ground? We’ve got you covered with free welcome message templates to streamline the connection process.

Each template shows a different way you can welcome your customers. These examples make it simple to send a welcome email to meet your customer’s needs at their current spot in the customer journey.

About Us

An About Us welcome email introduces new subscribers to your company with a firsthand story. It gives you a chance to share who you are, what you do, and what you stand for. This helps you develop a relationship with your subscriber, which can help them feel more invested in your brand.

It’s also a chance to set expectations about the content or benefits you offer to your subscribers.

Hey [First name],

Welcome to [Brand name]. We’re thrilled to have you join us on our mission to [insert company mission or vision].

We started [Brand name] to solve [insert the problem your product or service solves] because [creation story for your founder(s)]. We want to inspire people to [insert big-picture product impact].

We are constantly refining our product to live up to our vision.

We believe that [our product] will make a difference for you too, and we can’t wait to hear your story. Please feel free to reply to this email and tell us about you and what you hope to achieve.

Thank you for joining us on this journey. We look forward to hearing your story.

Looking forward to hearing more,

[Signature]
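If you send templates like the one above at scale, the bracketed placeholders can be filled in programmatically. The following is a minimal Python sketch, not a feature of any particular email tool: the function names `fill_template` and `unfilled_placeholders` and the sample values are hypothetical, and it assumes placeholders are written exactly as `[Placeholder text]`.

```python
import re

# Matches one bracketed placeholder such as "[First name]".
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")

def fill_template(template: str, values: dict) -> str:
    """Replace each [Placeholder] that has a value; leave the rest untouched."""
    def substitute(match):
        key = match.group(1)
        # Keep the original "[...]" text when no value was supplied.
        return values.get(key, match.group(0))
    return PLACEHOLDER.sub(substitute, template)

def unfilled_placeholders(text: str) -> list:
    """List placeholders still present, so a draft is not sent half-filled."""
    return PLACEHOLDER.findall(text)

template = (
    "Hey [First name],\n\n"
    "Welcome to [Brand name]. We're thrilled to have you join us "
    "on our mission to [insert company mission or vision]."
)
draft = fill_template(template, {"First name": "Ada", "Brand name": "Acme"})
print(draft)
print("Still to fill:", unfilled_placeholders(draft))
```

Checking for leftover placeholders before sending is the useful part of the sketch: a welcome email that still reads "[First name]" undermines the personal tone the template is meant to create.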

Product Story

Product story emails showcase your product or service and give you a chance to educate and inspire with your welcome. A product story welcome email doesn’t just have to be about how you created your product. It can tell stories about:

- The problem your product or service solves

- Product benefits

- The materials you use to make your product

- Key product features

This welcome email can help you expand brand awareness as well as improve customer engagement and conversions.

Hi [First name],

Thank you for choosing [product or service]. We’re delighted to share [product].

At [Brand name], we understand that [problem that the product solves] is a challenge. That’s why we [share how your product is made, the materials you use, or features]. With our product, you’ll get [insert the benefits of the product and how it can solve the problem the customer is facing].

Thank you for joining the [Brand name] community. We’re here to help. And if you have any questions or feedback, contact us at [email, social media, or phone number].

p.s. [add a short personalized note for postscript].

With gratitude,

[Signature]

Video

Video welcomes are a quick and powerful way to connect with new customers, subscribers, or employees. You can feature the people, culture, or messaging that represent your brand in your video. Videos are also a great way to share:

- Product features and benefits

- Tutorials

- Promotions

Video welcome emails can help your business stand out from companies sending text-only email communication. They’re also a quick way to grab attention as you begin your relationship with a new contact.

Welcome to [Brand name], [first name of your subscriber].

We’re excited to share this video message [insert link to the video]. It will tell you a little bit about [content of your video].

Watching this quick video is just the first step toward reaching your goal of [desired outcomes for contact]. Our team is always here to offer you the guidance and resources you need.

Thank you for being a part of the [Brand name] community.

[Signature]

Free Gift or Offer

Free gifts and welcome offers give new subscribers and customers a tempting reason to re-engage with your brand. They’re useful for creating urgency. These welcome emails are also a great way to highlight personalized offers for the latest addition to your email list.

A free offer or exclusive gift can improve customer retention and loyalty, as well as build anticipation for future offers.

Hey [First name] —

Welcome to [Brand name]!

As the latest (and greatest) addition to our community, we’d like to give you a free [insert gift item]. It’s our way of saying thank you for choosing us for your [product type] needs.

To claim your [offer], just add the promo code FREEGIFT at checkout and your gift will be on the way to you soon.

If you have any questions or feedback until then, please get in touch at [contact information]. We’re always here to help.

All best,

[Signature or Brand name]

Event Signup

An event signup welcome email is key to the event registration process. This one piece of communication:

- Confirms successful event registration

- Offers important event logistics

- Highlights speakers and other event details

- Prepares attendees for the event

This type of welcome email is also a first step to connecting with a customer. It builds trust and shows how they can benefit from further engagement.

Hi [Attendee name],

Thank you for registering for [Event name]. We can’t wait for you to join us during this important event.

This email includes your registration confirmation, event location, date, and more.

- [Registration Confirmation Details]

- [Attendee name]

- [Attendee email]

- [Registration type (such as VIP, General Admission, etc.)]

- [Number of tickets]

- [Confirmation code]

- [Event name]

- [Event location]

- [Date and time]

This session will include [featured panels, speakers, sessions]. We’ve also organized [meeting rooms, mixers] for networking opportunities and connecting with your peers. You’ll also have the chance to see [special events, attendee-only exclusives].

Note: You’ll need your confirmation code or badge to enter the event, and we’ve attached a PDF with other helpful tips.

If you have any questions about your registration, contact [Event organizer] or respond to this email.

Thank you again for registering for [Event name]. We can’t wait to see you there!

Best regards,

[Signature]

Confirmation

Confirmation emails can sometimes feel cold or impersonal, so this is another email where it’s vital to add some welcome. A confirmation email assures your subscriber or buyer that they’ve successfully completed signup. It’s also a chance to share useful information to make them feel more comfortable about what comes next.

For example, you might want to add order details, shipping, or the day of the week your newsletter comes out. Personalizing this welcome email can go a long way to building trust with your subscribers.

Hi [First name],

Thank you for your [subscription] to [Newsletter or Brand name]!

There’s just one more step to complete the process and join [Brand name’s] community of [term that describes your customers, such as business owners, rock stars, nature lovers]. Click the link below to confirm your subscription.

With that one click, you’ll be the first to know the latest updates, products, and resources from us. You’ll also have access to quality content and support.

Thank you again for subscribing. We can’t wait to share and learn with you.

[Signature]

Free Trial

Your welcome email for a free trial is important because it sets the tone for your relationship with each customer. It’s a chance to say thank you, offer extra help, and set expectations for your product.

This first email is also a chance to show users how to make the most of your product and point out features and benefits they might miss on their own.

This welcome email has a specific goal: to turn that free-trial user into a paying customer. With that in mind, it’s important to strike a balance. This email should point out tips, features, and details, but not overwhelm with too much information.

Hi [First name]!

Thank you for signing up for your free trial of [product or Company name]. We can’t wait for you to try out our [product].

With your free trial, you’ll have access to [popular features] so you can test what works for you. To make the most of your free trial, [outline first step], then [list two or three potential use cases].

If you’re looking for support or instructions, check out [links to support, help, and social media resources]. You can also watch the product video below for a quick walk-through.

We’ll be in touch with next steps for your trial soon. Until then, thank you again for choosing [product or brand name]!

Hoping this is helpful,

[Signature]

Thank You

Thank You welcome emails lead with gratitude to your subscribers and customers. Whether they’re signing up for your newsletter, RSVPing for an event, or making a purchase, this welcome email leads with the positive.

Hi [First name],

Thank you for choosing [Brand name]. We’re so happy you decided to [join, subscribe, complete a purchase].

Giving you a great experience is our top priority — and on that note, we want to make sure you know that our [Customer loyalty team, customer support team, social media community] is here with news, offers, and more just for you.

Again, thank you for choosing [Brand name]. We look forward to offering you quality products and winning service for many years to come.

All best,

[Signature]

Welcome Email Template for New Customers

Your new customer welcome email often marks the beginning of the customer relationship. This email usually contains a lot of information. It might include order confirmation, product information, helpful tips, or a review request.

At the same time, it needs to set a tone that emphasizes the character and value of your brand and products. So, it needs to be welcoming, engaging, and encouraging.

Hi [Customer],

This is really exciting: Welcome (officially) to [your product or service here]. We’re so lucky to have you.

[I/we] are here to help make sure you get the results you expect from [your product or service here], so don’t hesitate to reach out with questions. [I’d/we’d] love to hear from you.

To help you get started, [I/we] recommend checking out these resources:

- [Resource 1]

- [Resource 2]

- [Resource 3]

If you need support, you can reply to this email or give us a call at [555-555-5555]. [I/we] can talk you through the details and information you need to get started.

Looking forward to hearing from you,

[Your company/name]

Discount Code

Discount codes make great welcome emails because they lead with something your subscriber wants. A discount encourages a purchase, but this email is also a chance to show appreciation, develop brand awareness, or boost new products.

To make the most of this type of welcome email, think about limited-time or occasion-specific offers. This adds urgency and gives you a chance to quickly boost your customer relationship.

[First name],

You don’t have to wait to experience [popular products]. As a welcome to our community, we’re offering you a special discount.

To use your discount, just enter the code WELCOME10 when you check out. You can use this code to purchase [specific products or special promotion].

One more thing: Be sure to take advantage of this offer before [expiration date].

If you need any help or guidance using your discount code, just get in touch with [support team information].

Thank you!

[Signature]

Customer Loyalty

Some customers will get more than one welcome email from you, so it’s important to make your welcome email specific. One example — your customer loyalty program. When someone signs up to be an affiliate or joins an incentive program for your brand, they need a different kind of welcome.

As you draft this email, focus on personalized connection. Whether you’re offering thanks for their support, sharing sneak peeks, or giving exclusive offers, each customer needs to feel special.

Use surveys, interactive features, and integrations to collect feedback from current customers. Then, once your subscribers become loyal customers, you can use these tools to make your loyalty welcome email super personal.

Hey [First name],

Welcome to [Brand loyalty program]! You’ve joined an exclusive group of customers who make our brand and products better, and we are so excited you’re here.

Customer loyalty at [Brand name] means [outline top loyalty program benefits]. It’s a personal thank you for choosing our products.

Your membership also includes these perks:

[Benefit 1]

[Benefit 2]

[Benefit 3]

To make the most of your benefits, [share first steps to activate membership].

We also want to hear from you! Contact us with any questions or feedback — our team is always here to help.

Your first purchase, [name of first product purchase], set you on the path to becoming one of our most loyal customers. We can’t wait to see what you’ll do as part of our [Loyalty program] community.

Kind regards,

[Signature]

New Donor

Each new donor has a major impact on your organization’s future. So, the way that you welcome each donor is a key part of their experience.

This welcome email is a chance to offer thanks, review your company’s mission and vision, or ask for continued or deeper engagement. The donor welcome email is also a time to:

- Share inspirational stories

- Highlight the problems your organization is working to solve

- Offer recent data on the status of your work

Dear [Donor name],

I’m writing to personally welcome you to [Nonprofit Organization name]. Thank you again for your generous donation.

Your contribution is making an immediate impact on our work to [revisit your mission and/or vision].

With your support, our team will continue to [outline important services and impact]. With continued work together, we can make a lasting difference.

We will stay in touch with updates and events at [Nonprofit Organization name]. We’ll also share critical updates on how your contribution is improving [share recent data and statistics toward critical goals].

Thank you again for your donation, and for choosing to be a part of [Nonprofit Organization name]’s vision.

Best regards,

[Signature]

Now that you’ve seen some great examples of welcome emails and templates, let’s dig into the process of writing a great email and catching customer attention.

1. Write a catchy subject line.

Research shows that while more than 90% of welcome emails are opened, just 23% of them are actually read. That means if your welcome email doesn’t catch the eye of your new customer, they may not know you sent it at all.

The best tool you can leverage to increase email open rates is the subject line. A catchy and actionable subject line can draw customers in and make them curious about your content.

When writing subject lines, be sure to include what your email is promoting and how it will benefit your customer. Remember to be concise, because the reader can only see a sentence or two in the preview. A good rule of thumb is that your subject line should give enough information to pique the reader’s interest, but not so much that they have no reason to open your email for the full details.

2. Restate your value proposition.

Although this may seem like an unnecessary step to take, it can actually offer some significant benefits.

The most obvious benefit is that it gives the customer some reassurance that they made the right decision signing up. It’s never a bad thing to remind customers why they created an account with you, and it clarifies exactly what they can expect to achieve with your product or service.

This also gives you the opportunity to clearly explain any ancillary services or features that you offer that could create more stickiness with your business. This is especially true if you have a complex solution with unique features that customers might not know about.

3. Show the next onboarding steps.

Now that you’ve reminded them why they signed up, get them fully set up with your product or service. Usually, there are steps that users must take after signing up to get the most out of the platform. Examples include:

- Completing their profile information

- Setting preferences

- Uploading necessary information (such as contacts into a CRM, profile picture for a social media profile, etc.)

- Upgrading their account or completing an order

4. Generate the “A-ha” moment.

This is one of the most important steps to take in a welcome email, and there’s a data-driven reason behind that. Former Facebook head of growth Chamath Palihapitiya discovered that users who acquired seven friends within 10 days were much more likely to see Facebook’s “core value” and become returning active users. This is known as an “a-ha moment,” in which the customer understands how they benefit from using your product or service.

The goal is to get the user to this a-ha moment as quickly as possible so your product sticks and the customer achieves success early. This will produce a better overall customer experience and ultimately help your business grow.

To get this done, first identify your business’s “core value” and the obstacles or prerequisites customers must complete to receive this value. Then you can use your welcome email to guide new customers through these tasks.
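Once you have named your own milestone, you can check which new users have reached it and tailor follow-up emails accordingly. Here is a minimal Python sketch that uses the Facebook figures quoted above (seven friends within 10 days) purely as an illustration. The function name `reached_aha_moment`, the constants, and the sample users are hypothetical; your own product will need a different milestone and threshold.

```python
from datetime import datetime, timedelta

# Illustrative thresholds taken from the Facebook example above.
FRIENDS_NEEDED = 7
WINDOW = timedelta(days=10)

def reached_aha_moment(signup: datetime, friend_added_at: list) -> bool:
    """Return True if enough friends were added within the window after signup."""
    deadline = signup + WINDOW
    in_window = [t for t in friend_added_at if signup <= t <= deadline]
    return len(in_window) >= FRIENDS_NEEDED

signup = datetime(2024, 1, 1)
# Adds one friend per day on days 1 through 7: all seven fall inside the window.
fast_user = [signup + timedelta(days=d) for d in range(1, 8)]
# Adds one friend every five days: seven friends in total, but only two in the window.
slow_user = [signup + timedelta(days=d) for d in range(5, 40, 5)]

print(reached_aha_moment(signup, fast_user))
print(reached_aha_moment(signup, slow_user))
```

A user who has not reached the milestone is a natural candidate for a reminder email that walks them through the remaining prerequisite steps.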

5. Add helpful resources.

As mentioned in the previous step, you want the user to see the value immediately. But customer success doesn’t stop there. Depending on the nature and complexity of your product, customers may need more help. For example, customers might need guidance on troubleshooting, utilizing advanced features, or getting the most value out of your core features.

It’s likely that you’ve already created help content addressing common questions from customers. Whether it’s tutorial videos, an FAQ page, or helpful blog posts containing best practices, this help content is essential to customer success. Why not include it in your welcome email? This gives them the tools they need upfront without forcing them to search for the information after a problem arises.

6. Provide customer service contact information.

The final step to setting your customers up for success is making sure that they know how to contact you. You can spend all the time in the world creating excellent help content, but you can’t foresee every possible problem that will arise for your customers.

Even if you could, customers are only human, and not all of them will be willing to pore over your help resources to find the answer to their questions. So it’s best to be forthright with customers on how they can get in touch with you for help.

Adding this contact information to your welcome email is a great way to lay the foundation of trust needed for building a relationship. It drives customer loyalty and reassures readers that you are available if they need you. Avoid sending customers on a treasure hunt just to find a way to ask you a simple question. This will lead to frustration and send them into the arms of your competitors.

7. Conclude with a call-to-action.

You should wrap up your welcome email with a call-to-action that entices customers to begin the onboarding process. After you’ve demonstrated your company’s values and explained how you’re going to help them achieve their goals, customers will be eager to get started. So, make things easier for them by providing a button at the end of the email that triggers the first step in the onboarding process.

Here’s one example of what this could look like.

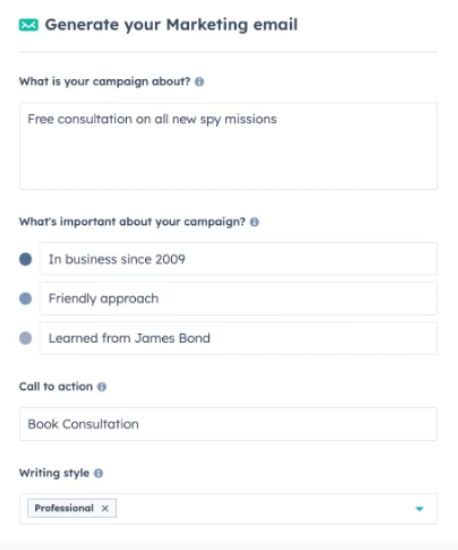

Pro tip: To scale the process, you can use the steps above to create an AI prompt that will generate a first-draft welcome email in seconds.

Just plug your value props, next steps, and CTA into a tool like HubSpot’s Campaign Assistant to get started. You can even use the same prompts to create matching ad copy or landing page content.
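As a rough illustration of turning the steps above into a reusable prompt, here is a minimal Python sketch. It only assembles plain text that you could paste into whichever AI writing tool you use. It does not call any tool’s API, and the function name `build_welcome_email_prompt`, its parameters, and the sample values are all hypothetical.

```python
def build_welcome_email_prompt(brand, value_props, next_steps, cta, support_contact):
    """Assemble a plain-text prompt that covers the main steps above."""
    lines = [
        f"Write a welcome email for new customers of {brand}.",
        "Start with a catchy, concise subject line.",
        "Restate these value propositions:",
        *[f"- {prop}" for prop in value_props],
        "Walk the reader through these next onboarding steps:",
        *[f"{number}. {step}" for number, step in enumerate(next_steps, start=1)],
        "Point to helpful resources such as tutorials or an FAQ page.",
        f"Tell the reader they can get help at {support_contact}.",
        f"End with this call-to-action: {cta}",
    ]
    return "\n".join(lines)

prompt = build_welcome_email_prompt(
    brand="Acme",
    value_props=["Save time on invoicing"],
    next_steps=["Complete your profile", "Set your preferences"],
    cta="Get started",
    support_contact="support@example.com",
)
print(prompt)
```

Keeping the prompt in a function means the same inputs can be reused for matching ad copy or landing page drafts by changing only the first instruction line.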

How to Write an Employee Onboarding Welcome Email

Welcome emails aren’t just for customers. The onboarding process has a huge impact on how new employees feel about your company, so it’s important to give it the time and energy it deserves.

One important part of this process is the onboarding welcome email. It has to match the company’s tone and outline all the expectations you have for the new employee. If this is your first time writing an onboarding email, the following steps will help.

1. Decide on the content of your onboarding email.

Before you start, decide what your onboarding welcome email needs to cover.

The contents are going to vary based on the conditions. For example, an email onboarding remote employees is completely different from an onboarding email for an employee who will work onsite.

For an onsite employee, the onboarding email should include:

- Welcome events

- First-day schedule

- Arrival instructions

- How to access their workstation

- Break room details (where to warm lunch, get coffee, etc.)

- Dress code

- What they’re required to bring (passport, ID, social security card, or any other paperwork)

- Parking information

- Contact information

For a remote employee, the content may include:

- First-day schedule

- Contact information

- Signup details for collaboration tools

- Welcome video conference meeting (time to be held)

Again, you can change the content based on your company’s needs.
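If you onboard people regularly, it can help to keep these two lists as data and check each draft against them. The following Python sketch is one hypothetical way to do that. The names `CHECKLISTS`, `onboarding_checklist`, and `missing_items` are illustrative, and the entries simply mirror the two lists above.

```python
# Checklist entries mirror the onsite and remote lists above.
CHECKLISTS = {
    "onsite": [
        "Welcome events",
        "First-day schedule",
        "Arrival instructions",
        "How to access their workstation",
        "Break room details",
        "Dress code",
        "What they're required to bring",
        "Parking information",
        "Contact information",
    ],
    "remote": [
        "First-day schedule",
        "Contact information",
        "Signup details for collaboration tools",
        "Welcome video conference meeting",
    ],
}

def onboarding_checklist(work_mode, extras=()):
    """Return the checklist for a work mode, plus any company-specific extras."""
    if work_mode not in CHECKLISTS:
        raise ValueError(f"Unknown work mode: {work_mode!r}")
    return CHECKLISTS[work_mode] + list(extras)

def missing_items(draft_sections, work_mode):
    """List checklist items the draft does not cover yet (case-insensitive)."""
    covered = {section.lower() for section in draft_sections}
    return [item for item in onboarding_checklist(work_mode) if item.lower() not in covered]

print(missing_items(["First-day schedule", "contact information"], "remote"))
```

The `extras` argument is where company-specific items would go, in line with the note that the content can change based on your needs.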

2. Decide on the tone you want to use in your email.

The next thing you need to decide on is the tone you want to use in your onboarding email. Do you consider your company friendly, casual, or super formal? Whatever your answer is, it should be reflected in the tone of the onboarding email. This gives the employee an idea of the kind of workplace environment they should expect. It also signals the tone your new employee is expected to use when representing your brand.

3. Draft your onboarding email.

The next step is to draft your onboarding email. While the tone of your email might change to fit your needs, here is an example of a template you can use.

Dear [Employee’s name],

We are very excited to welcome you to [company name]. Please remember to carry your ID to get easy access to our premises. We expect you to be in the office by [time], and our dress code is [formal/super casual].

At [company name], we pride ourselves on creating the best environment for our employees. As you’ll see, our team has already prepared your workstation for you and set up your software to make your first day easy. You’ll also be given access to your designated parking spot, a customized company bag, t-shirt, and mugs, among other goodies.

Our team has also planned all the details for your first week to ensure you settle easily. You’ll receive a document with your schedule and agendas for your first week from HR when you arrive. Human Resources will also help you fill in the required paperwork and answer all your questions. After the meeting with HR, you’ll be assigned a mentor who will show you the ropes of our company and how we get things done.

Our team is excited to meet you during the [planned event].

If you need any clarity before you arrive, please contact me by phone [phone number] or email. I’ll be more than happy to help.

Welcome to [company name], [employee name]. We are looking forward to working with you and watching you grow and soar to greater heights!

Warm Regards,

[Signature]

4. Edit your email.

After writing your email, edit it to confirm that it includes all the necessary details. You can use tools like Grammarly to catch grammatical errors, or have a colleague double-check the email. Remember to attach any necessary documents, links, or images as supplemental information.

5. Send or schedule the email.

Lastly, send the email or schedule it so it’s received in a timely manner. For example, you want to avoid sending an onboarding welcome email on Sunday evening, which may give the wrong impression.

Sending it in good time allows the new employee to prepare mentally and gather the necessary documents.
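If you schedule onboarding emails automatically, a small helper can keep them out of evenings and weekends. This Python sketch is only an illustration: the 9:00 to 17:00 weekday window, the constants, and the function name `next_send_time` are assumptions made for the example, not a rule from this guide, so adjust them to your own working hours and time zone.

```python
from datetime import datetime, time, timedelta

# Assumed working window for the example: weekdays, 9:00 to 17:00.
SEND_HOUR = 9
CUTOFF_HOUR = 17

def next_send_time(candidate: datetime) -> datetime:
    """Move evening, early-morning, or weekend sends to 9:00 on a weekday."""
    send = candidate
    if send.hour >= CUTOFF_HOUR:
        # Too late in the day: roll over to 9:00 the next morning.
        send = datetime.combine(send.date() + timedelta(days=1), time(SEND_HOUR))
    elif send.hour < SEND_HOUR:
        # Too early: wait until 9:00 the same day.
        send = datetime.combine(send.date(), time(SEND_HOUR))
    # weekday() returns 5 for Saturday and 6 for Sunday: skip ahead to Monday.
    while send.weekday() >= 5:
        send = datetime.combine(send.date() + timedelta(days=1), time(SEND_HOUR))
    return send

sunday_evening = datetime(2024, 1, 7, 19, 30)  # a Sunday
print(next_send_time(sunday_evening))
```

With these assumptions, the Sunday evening send in the example is moved to the following Monday morning, while a send already inside the window is left unchanged.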

Make a Great First Impression

Bottom line? Whether it’s in person, over the phone, or by email, first impressions matter. Your welcome email is often the first chance a prospective customer or contact has to see what your brand is all about, and if you don’t stick the landing, they’ll likely go somewhere else.

Luckily, writing a great welcome email is simple. It’s not necessarily easy, but if you focus on what matters, such as compelling subject lines, great content, personalized offers, and always, always a way to opt out, your first impression can help lay the groundwork for long-term relationships.

Editor’s note: This post was originally published in April 2016 and has been updated for comprehensiveness.

12 Tips on How to Become an Influencer [+Data]

The influencer landscape is incredibly lucrative. In 2022, the influencer market was valued at $16.4 billion and is estimated to hit $21.1 billion in 2023. If you want to step into the influencer market, you’re probably wondering how to become an influencer.

In this article, we’re going to dive into what it takes to become an influencer and the steps you need to take to find success. First, let’s define an influencer.

What is an influencer?

An influencer is a person with the ability to influence consumers to purchase a service or product by promoting, recommending, or using them on social media.

For example, Jackie Aina is a beauty and makeup influencer who has collaborated with and promoted brands such as e.l.f. Cosmetics, Too Faced, Milk Makeup, and more.

How to Become an Influencer for a Brand

If you want to become an influencer who works with brands, here’s what you need to do to reach your goal.

1. Find your niche.

First, figure out what you’re passionate about. Is it fashion, tech, entertainment, health, or something else? From there, carve out a niche within your passion to set yourself apart from other influencers.

For example, if you want to be a fashion influencer, you might decide your niche is thrift store fashion, DIY fashion, or stylish outfits on a budget. If you need help finding your niche, determine who your target audience is first.

To determine your target audience, consider your ideal consumer’s wants, needs, challenges, and goals. Then use that information to create a buyer persona, either on your own or with HubSpot’s Buyer Persona Generation Tool, and find the niche that best reaches your target audience.

2. Choose your platform.

Once you know your target audience, you must choose a platform (or platforms) to reach them. Instagram is one of the most popular platforms for influencers and brands, and it’s easy to see why.

According to our social media trends survey, 72% of marketers listed Instagram among the social media platforms on which they work with influencers and creators.

Furthermore, the largest share of marketers surveyed (30%) said Instagram is the platform where they get the most significant ROI when working with influencers and creators. However, that doesn’t mean Instagram is the right choice for everyone, especially if your ideal audience doesn’t spend much time on that platform.

For example, if you’re an influencer whose niche has to do with video games, Twitch might be the better platform. Video game fans often tune into Twitch to watch content creators play their favorite games or to stream their playthroughs.

If your audience is mostly Gen Z, you’ll likely want to consider TikTok as your platform of choice.

You should also research other influencers in your niche to see what platforms they leverage the most. For example, style influencers are primarily on Instagram or Pinterest. Entertainment influencers may mostly be on TikTok or YouTube.

Once you know which platform your audience and fellow influencers frequent the most, you can select the right social media platform to post your content.

3. Create a content strategy.

The format and quality of your content will make or break your chances of successfully establishing yourself as an influencer. Decide on the format you’ll use when creating your content.

The format should be feasible on the platform you choose to leverage, and it should be a format that allows you to deliver valuable information while showcasing your unique personality.

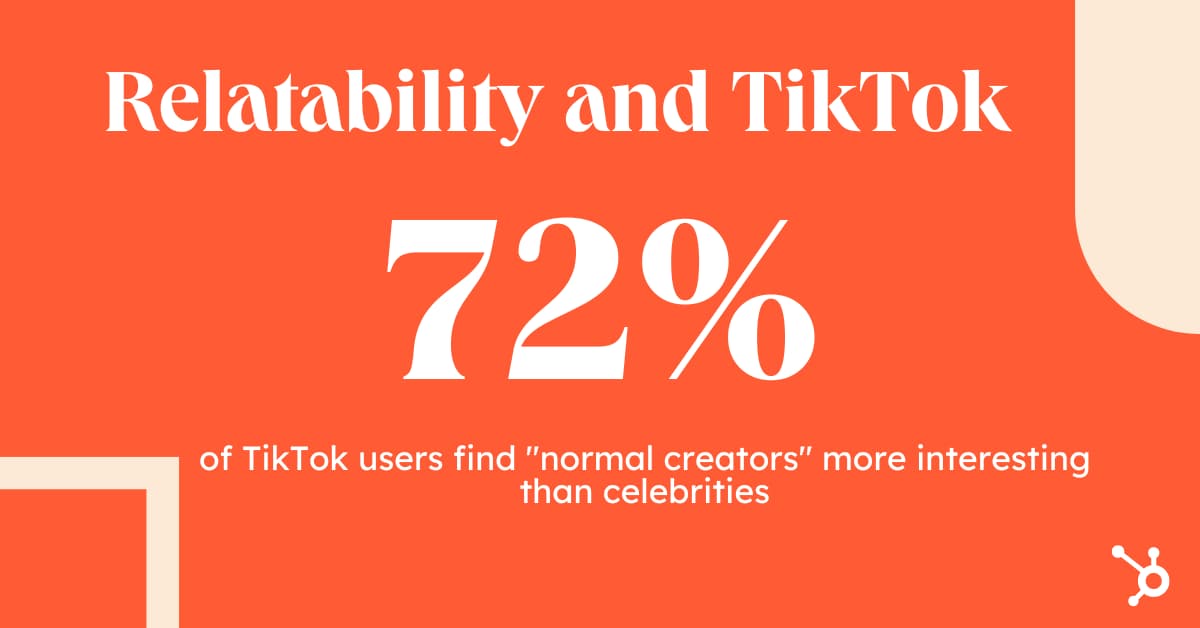

An effective content strategy will give your audience a proper balance of informative content and personal content. Remember, relatability and authenticity are the reasons people trust influencers.

In fact, 72% of TikTok users find “normal creators” more interesting than celebrities, according to the platform.

To find the perfect balance of content for your strategy, use the 5-3-2 principle. With the 5-3-2 principle, five out of every ten posts would be curated content from a source relevant to your audience.

Three posts should be content you’ve created pertinent to your audience, and two posts would be personal posts about yourself to humanize your online presence.
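If you plan posts in batches, the 5-3-2 split is easy to work out for any batch size. Here’s a minimal Python sketch. The function name `content_mix` and the choice to hand any rounding leftover to personal posts are illustrative assumptions, not part of the principle itself.

```python
def content_mix(total_posts: int) -> dict[str, int]:
    """Split a batch of posts using the 5-3-2 principle."""
    if total_posts < 0:
        raise ValueError("total_posts must be non-negative")
    curated = total_posts * 5 // 10   # content curated from relevant sources
    original = total_posts * 3 // 10  # content you created yourself
    # Assumption: whatever is left after rounding down becomes personal posts.
    personal = total_posts - curated - original
    return {"curated": curated, "original": original, "personal": personal}


print(content_mix(10))  # {'curated': 5, 'original': 3, 'personal': 2}
print(content_mix(20))  # {'curated': 10, 'original': 6, 'personal': 4}
```

For batch sizes that don’t divide evenly, the counts are only approximate, so adjust them to suit your audience.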

You’re probably wondering, “How will this help me become an influencer if half of the content I publish is curated?”

For starters, influencers are known for being able to provide valuable content to their audience. That includes sharing content written by others that they believe their followers will find helpful.

Sharing content published by other influencers in your niche will help you slowly get their attention. As a result, it will be much easier to reach out to them and ask them to do the same for you later on.

When it comes to the quality of your content, you should invest in equipment such as mics, cameras, and lighting to give your audience gorgeous content that will keep them coming back for more.

Pro Tip: Smartphones have excellent cameras these days, so you can use your phone to record your content if you’re not ready to invest in an expensive camera. Just make sure to use the rear-facing camera, which usually produces the better image.

4. Distribute your content.

No matter how great your content is, it won’t do much for you if people aren’t seeing it and engaging with it.

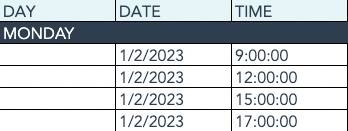

That said, it’s essential that you carefully plan out when you’ll be publishing and distributing your content on social media.

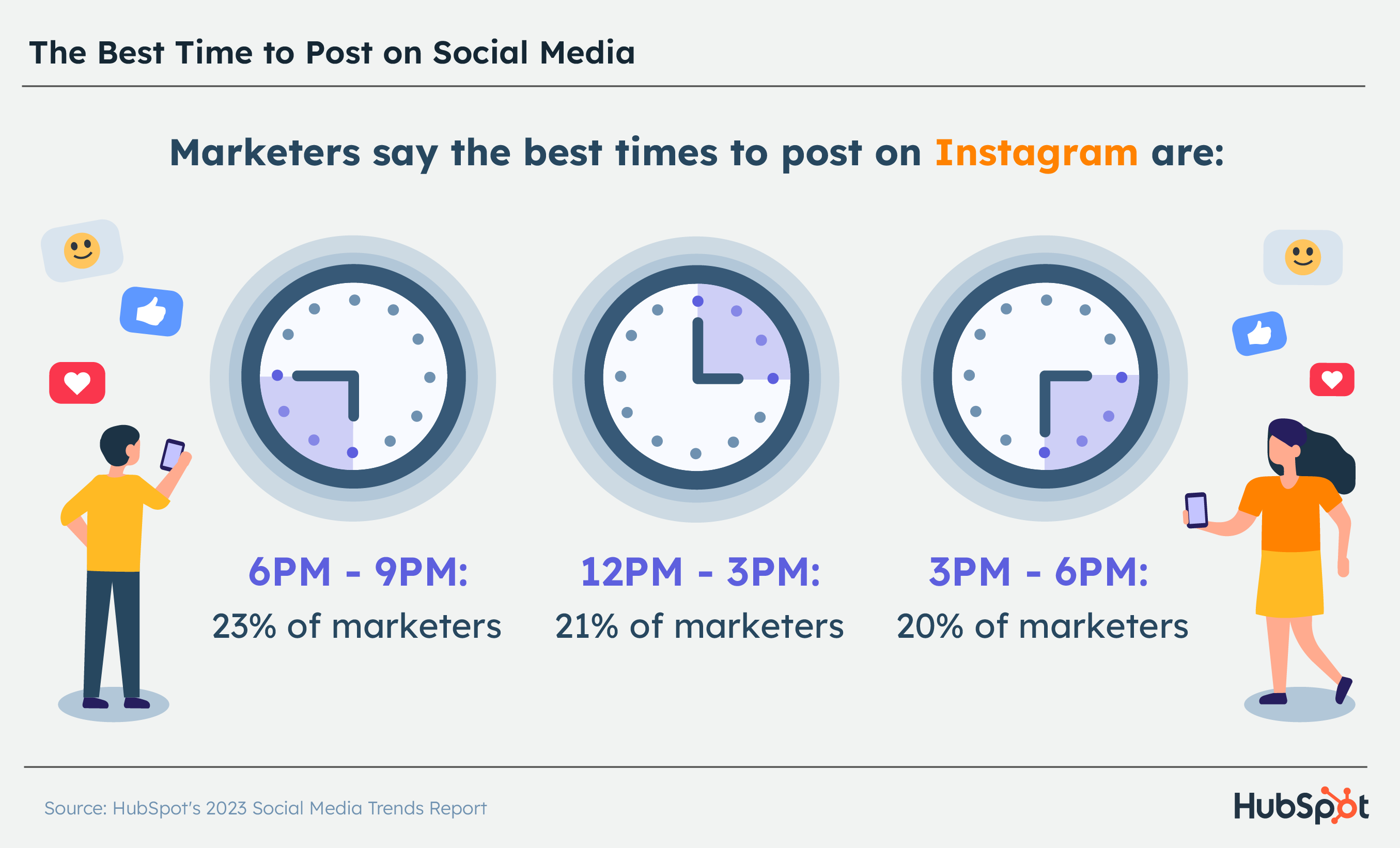

The best time to post content on social media depends heavily on which social media channel you choose. This infographic provides a detailed breakdown of the best days and times to distribute content for each popular social media network.

It’s just as critical to know how to post your content on social media. While each social media channel has its own rules and guidelines, here are some general best practices that are applicable regardless of which social media channel you use.

5. Start a website.

Whether you leverage YouTube, TikTok, Pinterest, or some other social media platform, you should always have your own website as an influencer.

Websites are great for SEO because they give you a space to create evergreen content, optimized with keywords that can help you reach the top of search engine results pages (SERPs).

You can create content around themes and keywords your audience is searching for, which draws them to your website.

Furthermore, a website is an excellent avenue for consumers to engage with you directly and buy your products. It also allows brands and advertisers to learn more about you and your content and reach out to you for opportunities.

Finally, securing a long-term home base is the most important reason to have a website. Social media platforms change constantly. An app that’s popular today can lose users tomorrow.

Even worse, a platform can completely shut down, taking all your content with it.

A website that houses your business information, content, links, and points of contact will help you stay relevant and grow as an influencer for years to come.

6. Stay updated.

As an influencer, staying tuned into the latest trends and buzzy topics is essential.

So, follow other creators in your niche on social media, keep an eye out for trending hashtags and challenges, and know what keywords your audience is searching for online.

You also need to remember that social media platforms will often change their policies, algorithms, and posting terms — so stay updated to avoid your account becoming irrelevant or, worse, deleted.

Most importantly, you’ll need to familiarize yourself with the Federal Trade Commission’s (FTC) guidelines and policies, especially if you’re going to be collaborating with brands to promote their products and services on your social media accounts.

7. Be yourself.

Remember, authenticity is key to being a successful influencer. Almost 70% of marketers say “authenticity and transparency” are crucial to successful influencer marketing, according to Econsultancy.

Moreover, 61% of consumers prefer influencers who create authentic, engaging content.

The best way to be authentic is to be yourself. While your content should be quality, you yourself don’t have to be flawless to be an influencer.

If your house cat walks into your shot, or you laugh as a car blasting music drives by as you’re recording — it’s okay!

Don’t be afraid to be silly on camera or show off your sense of humor — consumers love influencers because they’re more relatable and “real” than celebrities or companies.


8. Engage with your audience.

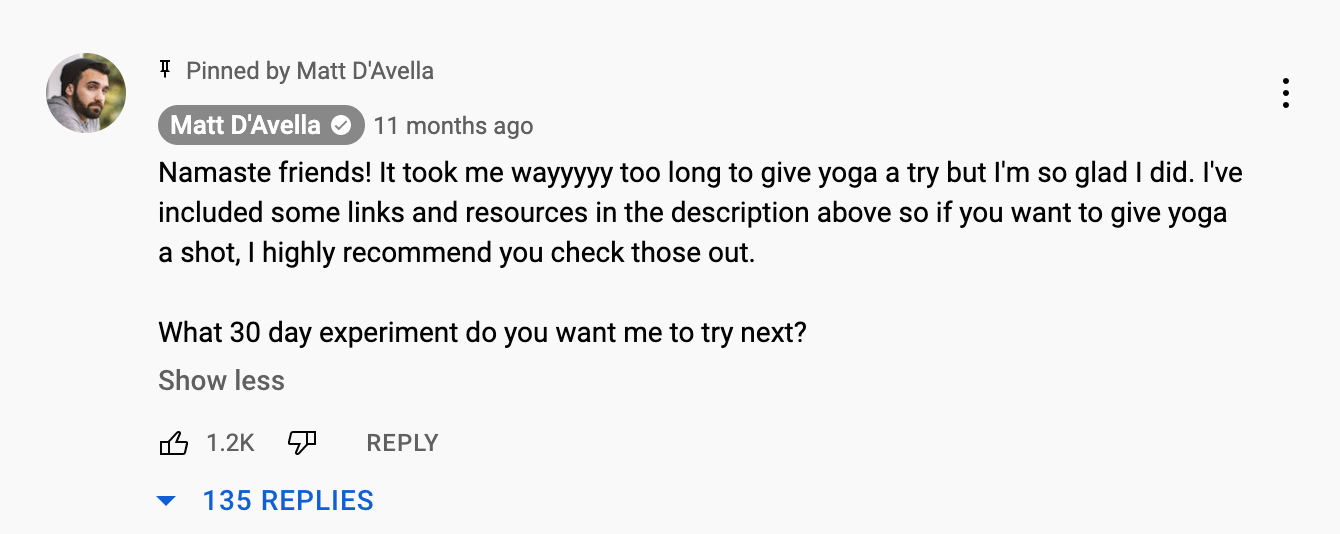

When your followers leave a question or comment on your posts, take the time to acknowledge and respond to them. That can make them feel valued and show that you sincerely want to help them. It will also help you develop a relationship with them.

Of course, not all of the comments and questions will be positive. As an influencer, expect that you’ll have your fair share of negative comments and criticisms. Make it a point to keep your cool and address them professionally.

9. Network with other influencers.

Collaborate with other influencers in your niche to expand your audience and grow your network. You can find potential collaborators through social media, online communities, or by attending conventions and events.

Having business cards to pass to potential collaborators also doesn’t hurt.

10. Create a media kit and pitch yourself to brands.

A media kit is an influencer version of a resume or portfolio. An influencer media kit contains information about your work, successes, audience size, and why brands should work with you.

Every influencer should have a media kit to email to marketing professionals, brand representatives, and agencies to find work.

The kit’s design is just as important as its content. Personality is key, so choose a design that showcases yours.

Media kits also make you look more professional. Many people step into influencing and content creation as a hobby. Having a media kit shows companies you are not a hobbyist and are serious about your work.

Your kit should include the following:

- Your photo

- A short bio

- Your social media channels, along with your follower count on each platform

- Engagement rate

- Audience demographics

- Website link

- Information about past work and collaborations

You can design a media kit using Canva or purchase media kit templates from Etsy. HubSpot also offers downloadable media kit templates.

11. Be consistent.

Your followers need to be able to count on you to deliver quality content consistently. If you don’t deliver, they’ll eventually stop following you, or at least stop paying attention to you.

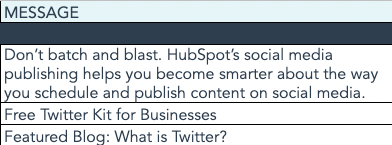

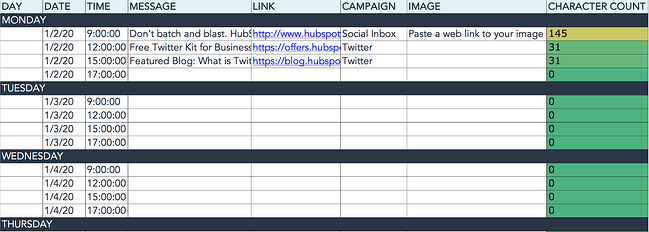

Scheduling your posts using a social automation tool like eClincher or HubSpot’s social publishing tools can help ensure you stay consistent with your posts.

Instead of manually publishing on each of your social media profiles, these tools allow you to create, upload, and schedule posts in batches.
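To see what batch scheduling looks like in practice, here’s a rough Python sketch that assigns a list of drafted posts to the next available posting days. It doesn’t call eClincher, HubSpot, or any real tool’s API. The `build_schedule` function, the Monday/Wednesday/Friday cadence, and the 9 a.m. slot are all hypothetical choices for illustration.

```python
from datetime import date, datetime, time, timedelta


def build_schedule(posts, start, weekdays, post_time):
    """Assign each post to the next allowed weekday at a fixed time.

    weekdays uses Python's convention: Monday is 0, Sunday is 6.
    """
    if not weekdays:
        raise ValueError("choose at least one posting day")
    schedule = []
    day = start
    for post in posts:
        # Skip ahead until we land on an allowed posting day.
        while day.weekday() not in weekdays:
            day += timedelta(days=1)
        schedule.append((datetime.combine(day, post_time), post))
        day += timedelta(days=1)  # one post per day at most
    return schedule


# Hypothetical batch of drafts, posted Mon/Wed/Fri at 9 a.m.
drafts = ["Tutorial reel", "Curated article", "Behind the scenes", "Q&A recap"]
plan = build_schedule(drafts, date(2024, 1, 1), {0, 2, 4}, time(9, 0))
for slot, title in plan:
    print(slot.strftime("%a %Y-%m-%d %H:%M"), "-", title)
```

A real scheduling tool handles the publishing itself. The point of the sketch is only that a fixed cadence makes consistency a planning problem you can solve once per batch.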

12. Track your progress.

This step is crucial, especially if you’re looking to collaborate with brands on their influencer marketing campaigns, since performance data is one of the things brands look for in an influencer to partner with.

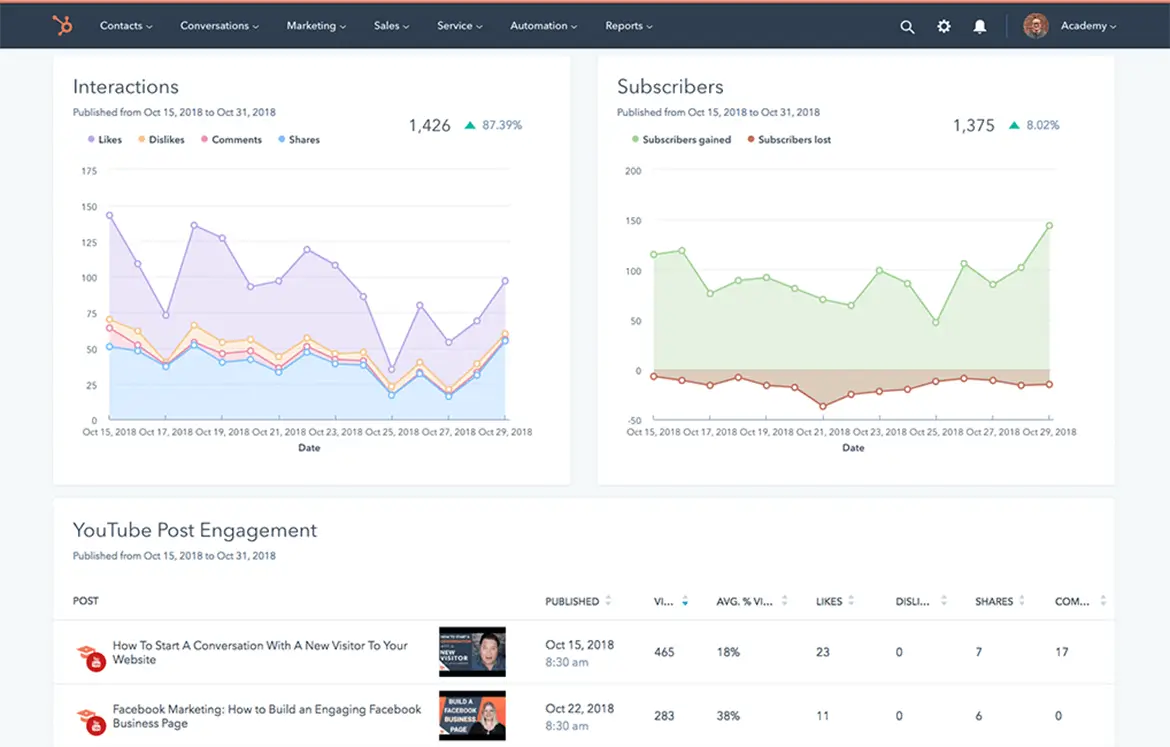

Most social media channels give you insights and analytics to monitor your progress — things like demographics, reach, and engagement rate that will show how quickly (or slowly) you’re building your audience.

These insights will also show which content formats get the highest engagement rates so that you can create more of them.
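Platforms calculate engagement rate in different ways, so treat the following as one common definition rather than an official formula: total interactions divided by follower count, expressed as a percentage. The Python sketch below uses made-up numbers and hypothetical function names to compare content formats.

```python
from collections import defaultdict


def engagement_rate(interactions: int, followers: int) -> float:
    """Interactions (likes + comments + shares) as a percentage of followers."""
    if followers <= 0:
        raise ValueError("followers must be positive")
    return interactions * 100 / followers


def average_rate_by_format(posts, followers):
    """posts is a list of (format_name, interactions) pairs."""
    rates = defaultdict(list)
    for format_name, interactions in posts:
        rates[format_name].append(engagement_rate(interactions, followers))
    return {name: sum(values) / len(values) for name, values in rates.items()}


# Made-up sample data for an account with 10,000 followers.
sample_posts = [("reel", 400), ("reel", 600), ("photo", 200)]
averages = average_rate_by_format(sample_posts, followers=10_000)
print(averages)                         # {'reel': 5.0, 'photo': 2.0}
print(max(averages, key=averages.get))  # reel
```

Whichever definition you use, apply it consistently so your numbers are comparable from month to month.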

How to Become an Influencer on Social Media

The steps above are all applicable to becoming a social media influencer. Some additional tips to keep in mind are:

1. Build an online community around your content.

Building trust with your audience is critical to your success as an influencer. One way to build trust is to build a community around your content.

Create a space where your audience can ask questions, engage with your content, and find others who enjoy your work or niche.

Some influencers start communities on Discord, Reddit, or other platforms to speak candidly with their followers. You can also host live Q&As or start your own hashtag for your followers to use to connect.

2. Repurpose content as necessary.

Fresh and interesting content should always be the priority when influencing, but sometimes it helps to repurpose content.

Repurposing content is especially helpful when you’re pressed for time, lacking fresh ideas, or just need to post something to keep on schedule.

You can also repurpose content to give your posts a second life on other platforms. If you have an Instagram Reel that performed well but could use more eyes on it — repost it to TikTok or YouTube Shorts.


3. Always be willing to learn and be open to new platforms.

As I mentioned earlier, social media platforms often fall in and out of favor with audiences, so always be ready to pivot when a platform is losing steam.

Keep an eye out for up-and-coming social channels, and always keep a pulse on where your audience is tuning in.

Ultimately, to be a successful influencer, you need to be authentic, organized, flexible, and willing to adjust to evolving trends.

And of course, you need to create quality content that shows brands and your followers that you are serious about your work. Now that you know the steps you need to take, you’re ready to dive into the influencer market.


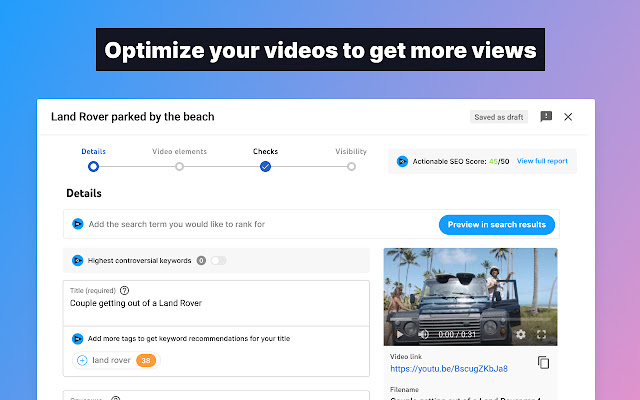

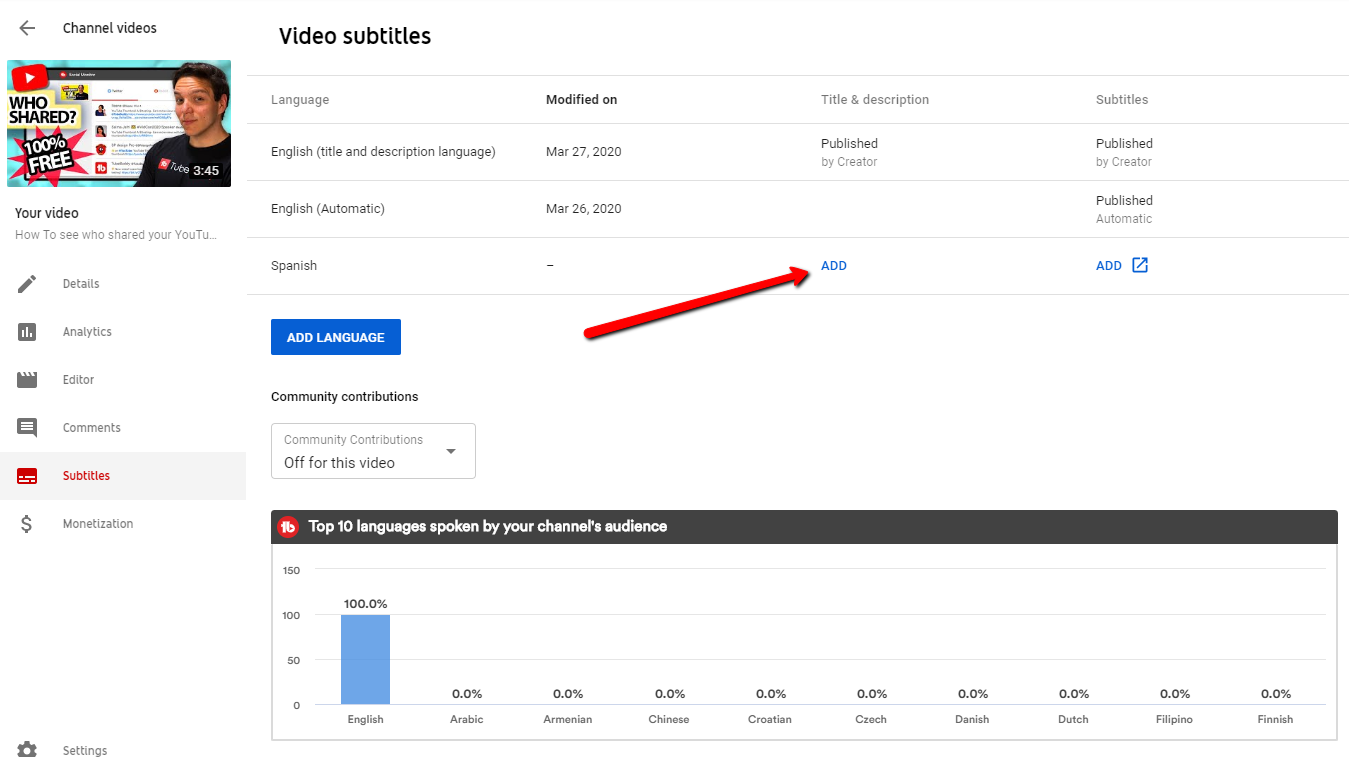

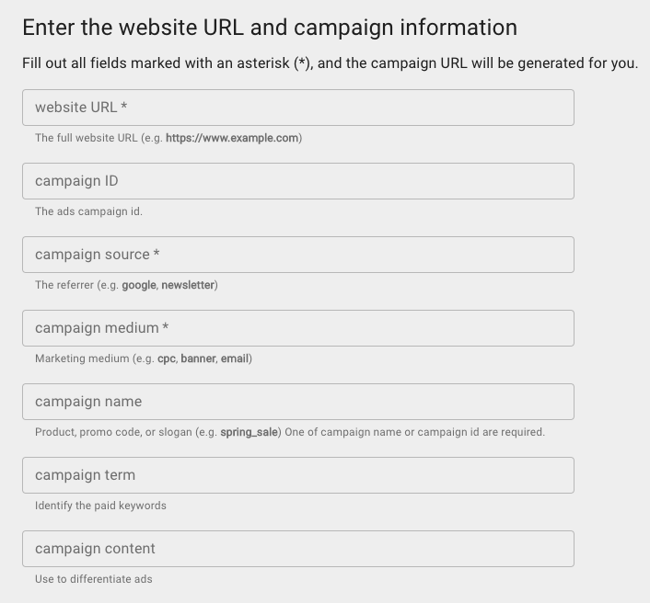

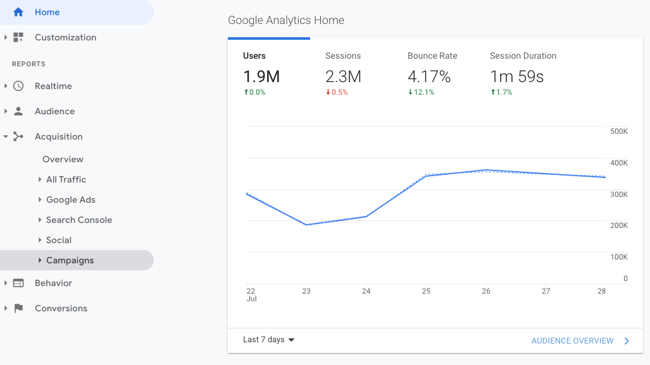

YouTube SEO: How to Optimize Videos for YouTube Search

How does YouTube SEO work? What are the steps to optimize your YouTube videos for search? The answers to these questions are simpler than you might think.

While it might seem difficult to get any exposure on YouTube, you can implement certain strategies to ensure that the YouTube algorithm favors you in the search results.

In this post, we’ll go over proven YouTube SEO tips that have worked for HubSpot’s YouTube channel and that will work for you, regardless of your channel size. Let’s get started.

How to Rank Videos on YouTube

To get videos to rank on YouTube, we must first understand the YouTube algorithm and YouTube’s ranking factors.

Just like any search engine, YouTube wants to deliver content that answers the searcher’s specific query. For instance, if someone searches for “how to tie a tie,” YouTube won’t deliver a video titled “how to tie your shoelaces.” Instead, it will serve search results that answer that specific query.

So, as you try your hand at YouTube SEO, think about how you can incorporate terms and phrases that are used by your target audience.

You’ll also need to think about YouTube analytics and engagement. When it ranks videos, YouTube cares about a metric called “watch time” — in other words, how long viewers stay on your video. A long watch time means that you’re delivering valuable content; a short watch time means that your content should likely not rank.

If you want your videos to rank, try to create content that’s optimized for longer watch times. You can, for instance, prompt users to stay until the end of the video by promising a surprise or a giveaway.
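If you export view counts and total watch time from your analytics, you can get a rough feel for how well a video holds attention. The Python sketch below is one way to summarize those numbers under simple assumptions. It is not YouTube’s ranking formula, and the function names and sample figures are hypothetical.

```python
def average_view_duration(total_watch_seconds: float, views: int) -> float:
    """Average number of seconds watched per view."""
    if views <= 0:
        raise ValueError("views must be positive")
    return total_watch_seconds / views


def average_percentage_viewed(avg_view_seconds: float, video_length_seconds: float) -> float:
    """Share of the video the average viewer watches, as a percentage."""
    if video_length_seconds <= 0:
        raise ValueError("video_length_seconds must be positive")
    return avg_view_seconds * 100 / video_length_seconds


# Made-up example: a 10-minute video with 200 views and 10 hours of watch time.
avg_seconds = average_view_duration(total_watch_seconds=36_000, views=200)
print(avg_seconds)                                  # 180.0
print(average_percentage_viewed(avg_seconds, 600))  # 30.0
```

Comparing these figures across your own videos can hint at which topics and formats keep viewers watching longer.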

Is it worth optimizing videos on YouTube?

Trying to rank videos on YouTube might seem like a lost cause. Only the most well-known influencers and content creators seem to have any luck on the platform.

However, that’s not the case. As a business, you can enjoy views, comments, and likes on your videos — so long as you find the right audience for your content. In fact, finding and targeting the right audience is even more important than creating a “beautiful” video. If you’re actively solving your prospective customers’ problems with your YouTube videos, then you’ve done 90% of the YouTube optimization work.

In addition, ranking videos on YouTube is a key element of your inbound marketing strategy, even if it might not seem that way. As recently as a decade ago, inbound video marketing was a brand new idea. Marketers were learning that they couldn’t just publish a high volume of content — it also had to be high-quality and optimized in ways that made it as discoverable as possible through search engines.

That content was once largely limited to the written word. Today, that’s no longer the case. Instead, a comprehensive content strategy includes written work like blogs and ebooks, as well as media like podcasts, visual assets, and videos. And with the rise of other content formats comes the need to optimize them for search. One increasingly important place to do that is on YouTube.

If you’re feeling lost, don’t worry. We cover the most important YouTube SEO tips and strategy below so you can effectively optimize your content for YouTube search.

YouTube SEO combines basic SEO practices with YouTube-specific optimization techniques. If you’re new to search engine optimization, check out this complete SEO guide.

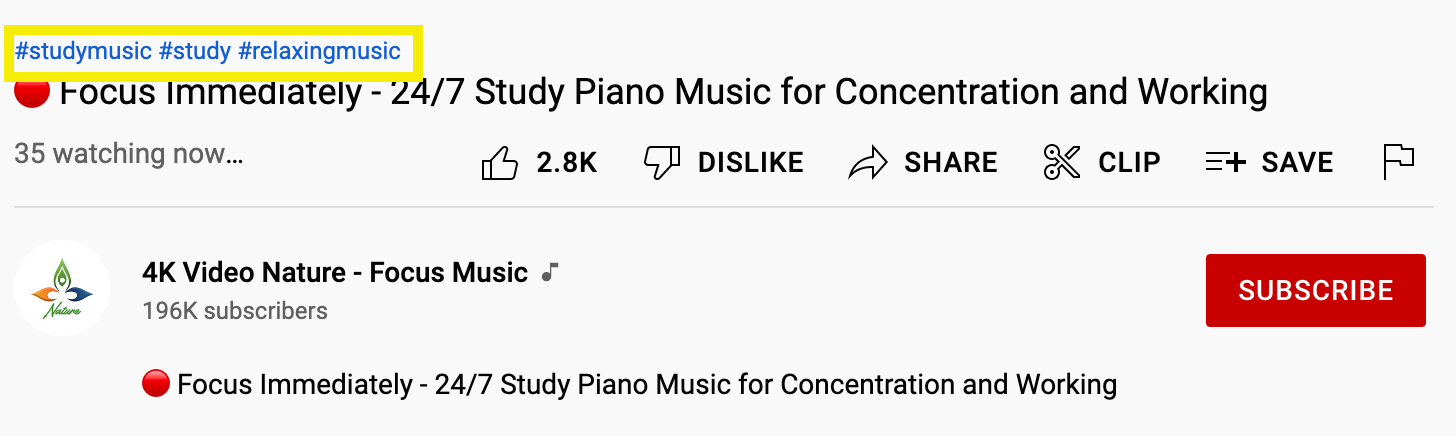

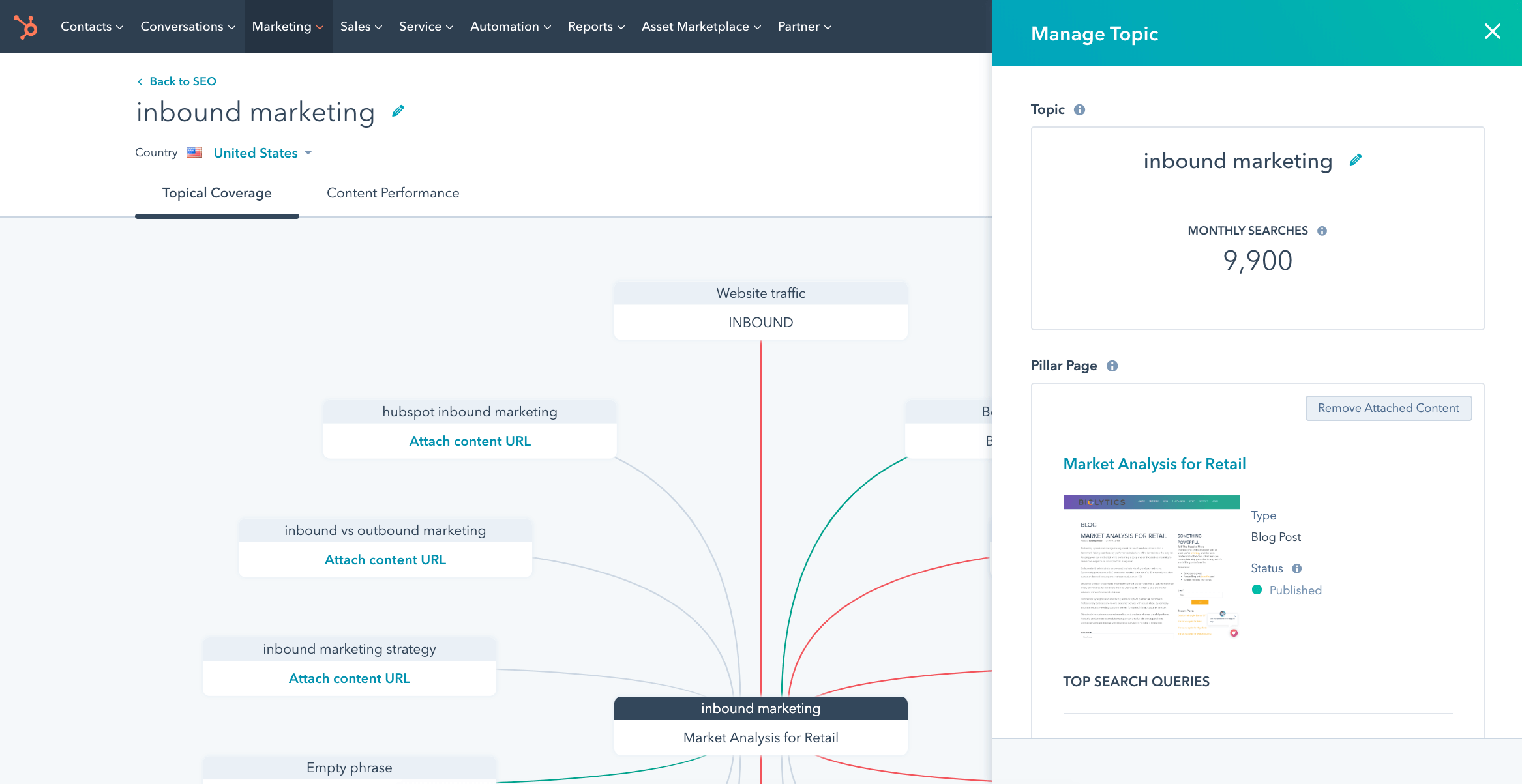

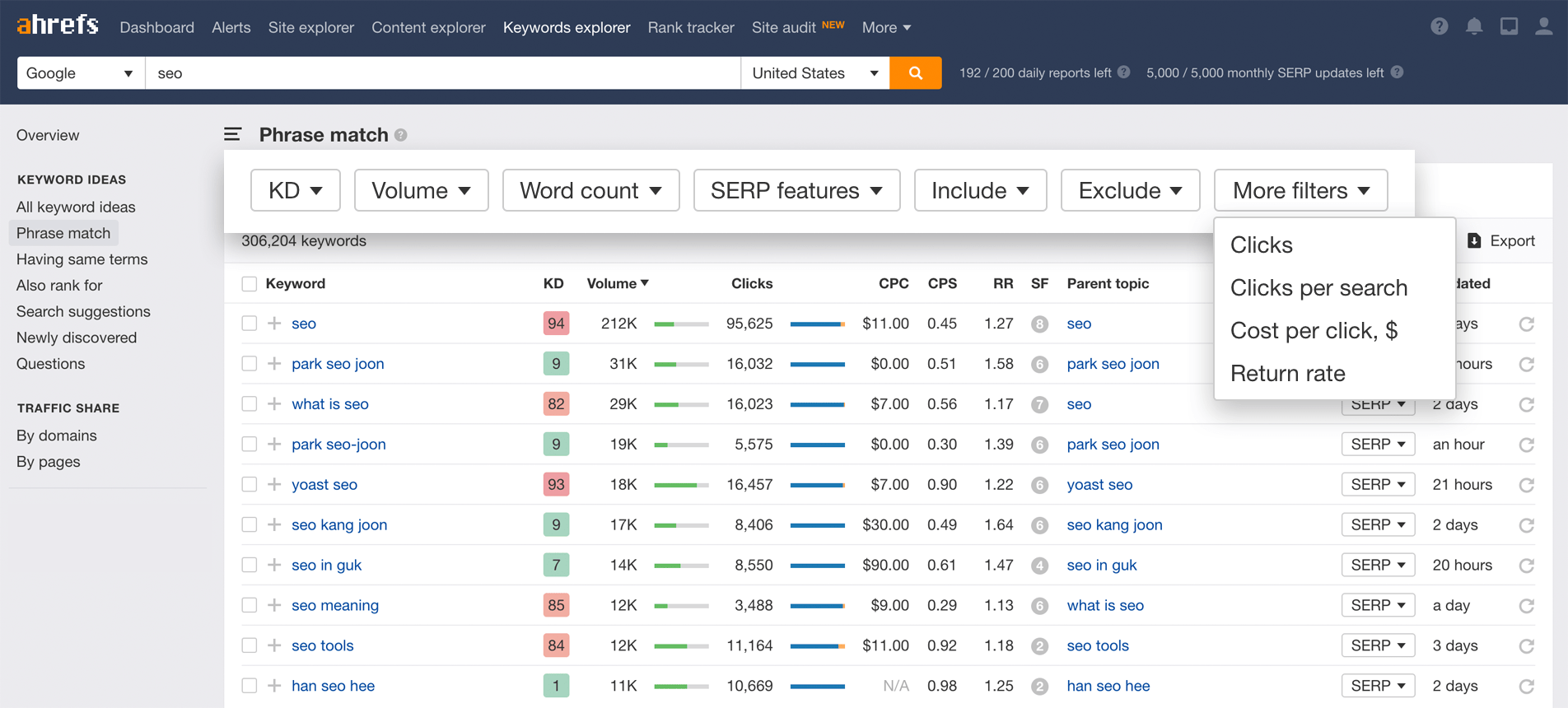

YouTube Strategy